Chapter 8: Vision-Language-Action Models — See, Speak, Act

Overview

VLA (Vision-Language-Action) models combine large vision-language models (VLMs) with robot action generation to pursue general-purpose control: a single policy that sees, understands instructions, and acts. The paradigm began with RT-2 in 2023 and is now widely adopted as the "brain" of major humanoid robots. This chapter covers the VLA lineage, pi0's Flow Matching approach, post-deployment improvement, tactile integration, and scaling strategies.

After reading this chapter, you will be able to:

- Trace the key inflection points in the VLA lineage from RT-1 to Gemini Robotics.

- Understand pi0's VLM + Flow Matching architecture.

- Describe how tactile sensing is integrated into VLAs (ForceVLA, Tactile-VLA).

- Explain the cross-embodiment data strategy of Open X-Embodiment.

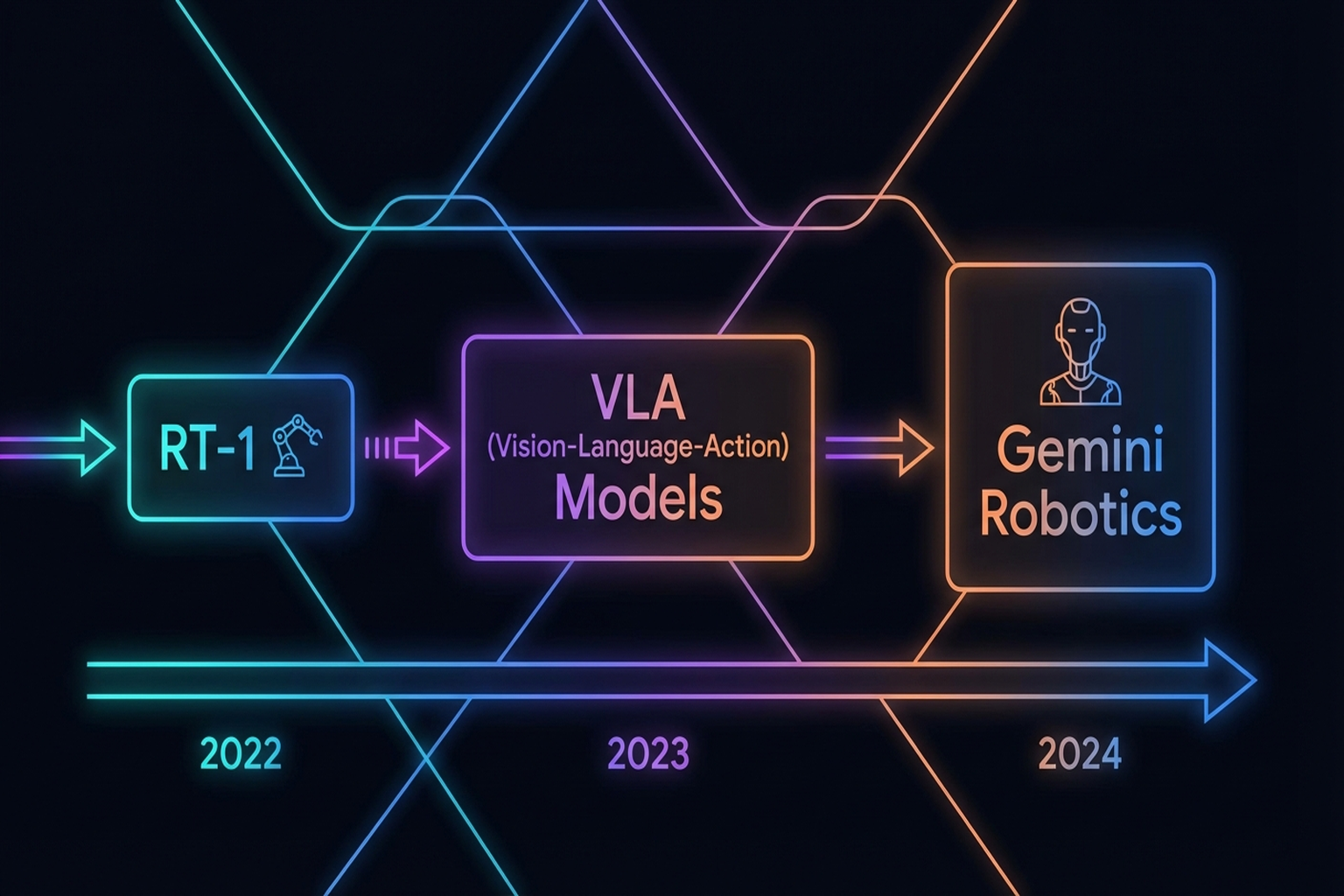

8.1 The VLA Lineage: From RT-1 to Gemini Robotics

The evolution of VLA models reflects a paradigm shift in robot learning:

RT-1 (2023)

Google / Everyday Robots' RT-1 [1] was the first large-scale real-world robot Transformer:

- 130K episodes from 13 robots

- Proved the feasibility of large-scale Transformers for real-world control

- 800+ citations (RSS 2023)

RT-2 (2023)

Google DeepMind's RT-2 [2] established the VLA paradigm:

- Fine-tuned large VLMs (PaLI-X, PaLM-E) on robot demonstration data

- Transferred web knowledge to robotic control

- Could execute commands like "pick up the apple"
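To make "fine-tuning a VLM on robot data" concrete: RT-2, following RT-1, discretizes each action dimension into 256 uniform bins so that the language model can emit actions as tokens, much like text. The sketch below illustrates that idea only. The normalized range of [-1, 1] and the function names are assumptions for illustration, not details taken from the RT-2 code.

```python
import numpy as np

NUM_BINS = 256  # bins per action dimension (uniform discretization)

def actions_to_tokens(action, low=-1.0, high=1.0, num_bins=NUM_BINS):
    """Map a continuous action vector to integer bin indices in [0, num_bins - 1]."""
    action = np.clip(np.asarray(action, dtype=np.float64), low, high)
    scaled = (action - low) / (high - low)  # rescale to [0, 1]
    # The upper edge (scaled == 1) would land in bin num_bins, so cap it.
    return np.minimum((scaled * num_bins).astype(np.int64), num_bins - 1)

def tokens_to_actions(tokens, low=-1.0, high=1.0, num_bins=NUM_BINS):
    """Map bin indices back to the center of each bin."""
    tokens = np.asarray(tokens, dtype=np.float64)
    return low + (tokens + 0.5) / num_bins * (high - low)

# Hypothetical 7-D action: 6 end-effector deltas plus a gripper command.
action = np.array([0.10, -0.35, 0.80, 0.0, 0.0, 0.25, 1.0])
tokens = actions_to_tokens(action)
recovered = tokens_to_actions(tokens)
max_error = float(np.max(np.abs(recovered - action)))
```

The round trip loses at most half a bin width per dimension, which is the price paid for letting a token-based VLM output actions without architectural changes.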

Key Paper: Brohan, A., Brown, N., et al. (2023). "RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control." CoRL 2023. The landmark paper establishing the VLA paradigm. First demonstrated that vision-language knowledge from large VLMs can transfer to robot actions.

OpenVLA (2024)

Stanford/DeepMind's OpenVLA [3] launched open-source VLAs:

- 7B parameters, roughly 7x fewer than RT-2-X (55B)

- Trained on Open X-Embodiment

- Outperformed RT-2-X by 16.5 percentage points in absolute task success rate across 29 tasks

- Fully open-source: weights, code, data

Octo (2024)

UC Berkeley's Octo[4] is a generalist robot policy:

- Transformer-based diffusion policy

- Pretrained on 800K episodes

- Flexible task/observation definitions

- Quick finetuning support

8.2 pi0: Vision-Language Models Meet Flow Matching

Physical Intelligence's pi0 [5] (Black et al., 2024) is a representative state-of-the-art VLA:

- PaliGemma 3B VLM backbone

- Flow Matching action expert: Action generation via continuous normalizing flows

- 7 robots, 68 tasks, 10,000+ hours of data

- Pre-training → post-training two-stage recipe

Key Paper: Physical Intelligence. (2024). "pi0: A Vision-Language-Action Flow Model for General Robot Control." arXiv:2410.24164. PaliGemma VLM + Flow Matching for general robot control across 8 embodiments. The two-stage pre-training/post-training recipe is the key innovation.

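pi0's core innovation is applying Flow Matching [8] to action generation. Flow matching trains a continuous normalizing flow by regressing a velocity field directly, without simulating the underlying ODE during training. At inference, actions are produced by integrating that field from noise over a small number of steps, which is typically cheaper than the many denoising steps of a standard diffusion model.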
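A minimal sketch of the flow matching idea applied to actions follows. It assumes the common linear interpolation path between noise and data and a plain Euler integrator. pi0's actual parametrization, conditioning on images and language, and network are not reproduced here. `velocity_fn`, `cfm_loss`, `sample_actions`, and the toy `oracle` field are all hypothetical names introduced for illustration.

```python
import numpy as np

def cfm_loss(velocity_fn, actions, rng):
    """Conditional flow matching loss on a batch of action vectors.

    Assumed path: x_t = (1 - t) * noise + t * action, so the regression
    target is the constant velocity (action - noise). No ODE is simulated.
    """
    noise = rng.standard_normal(actions.shape)
    t = rng.uniform(0.0, 0.99, size=(actions.shape[0], 1))
    x_t = (1.0 - t) * noise + t * actions
    target = actions - noise
    return float(np.mean((velocity_fn(x_t, t) - target) ** 2))

def sample_actions(velocity_fn, shape, rng, num_steps=10):
    """Euler-integrate dx/dt = v(x, t) from t = 0 (noise) to t = 1 (action)."""
    x = rng.standard_normal(shape)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = np.full((shape[0], 1), k * dt)
        x = x + dt * velocity_fn(x, t)
    return x

# Toy stand-in for a trained network: if every demonstration is the same
# action vector `goal`, the exact velocity field is (goal - x) / (1 - t).
goal = np.array([[0.2, -0.5, 0.7]])

def oracle(x, t):
    return (goal - x) / (1.0 - t)

rng = np.random.default_rng(0)
loss = cfm_loss(oracle, np.repeat(goal, 64, axis=0), rng)
samples = sample_actions(oracle, (4, 3), rng, num_steps=10)
```

In this toy setting the oracle field attains essentially zero loss and ten Euler steps carry every noise sample onto `goal`. A real policy would replace `oracle` with a learned network conditioned on observations.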

8.3 pi0.6/RECAP: Continuous Improvement Through Post-Deployment RL

pi0.6 [15] and RECAP realize continuous improvement via post-deployment reinforcement learning:

- Deploy initial pi0 → collect failure/success data

- Fine-tune with RL on collected data

- Deployment-learning-improvement data flywheel

This approach overcomes VLA's fundamental limitation — failure outside the demonstration data distribution — through continuous post-deployment learning.

pi0 Human-to-Robot Transfer (2025)

Physical Intelligence [Dec 2025] applied human co-finetuning to pi0:

- 2x performance improvement across 4 generalization scenarios

- Emergent alignment: Joint human/robot training produces natural alignment without explicit retargeting

- Consistent with the co-training approaches (EgoMimic, EgoScale) discussed in Chapter 10.6

EgoVLA (2025)

EgoVLA [arXiv Jul 2025] is a VLA pretrained on egocentric human videos then fine-tuned for robots:

- Learns action representations from MANO hand parameters in human egocentric video

- Resolves human hand → robot hand representation alignment within the VLA framework

- Integrates Chapter 6.1's MANO model with Chapter 10's retargeting approaches in a VLA context

PhysBrain (2025)

PhysBrain [arXiv Dec 2025] fine-tunes VLMs for the physical world using large-scale VQA data:

- Generates 3M VQA pairs from Ego4D/BuildAI

- Fine-tuning the VLM on this data yields a 53.9% success rate on SimplerEnv

- Demonstrates that human egocentric video is effective for teaching VLAs physical common sense

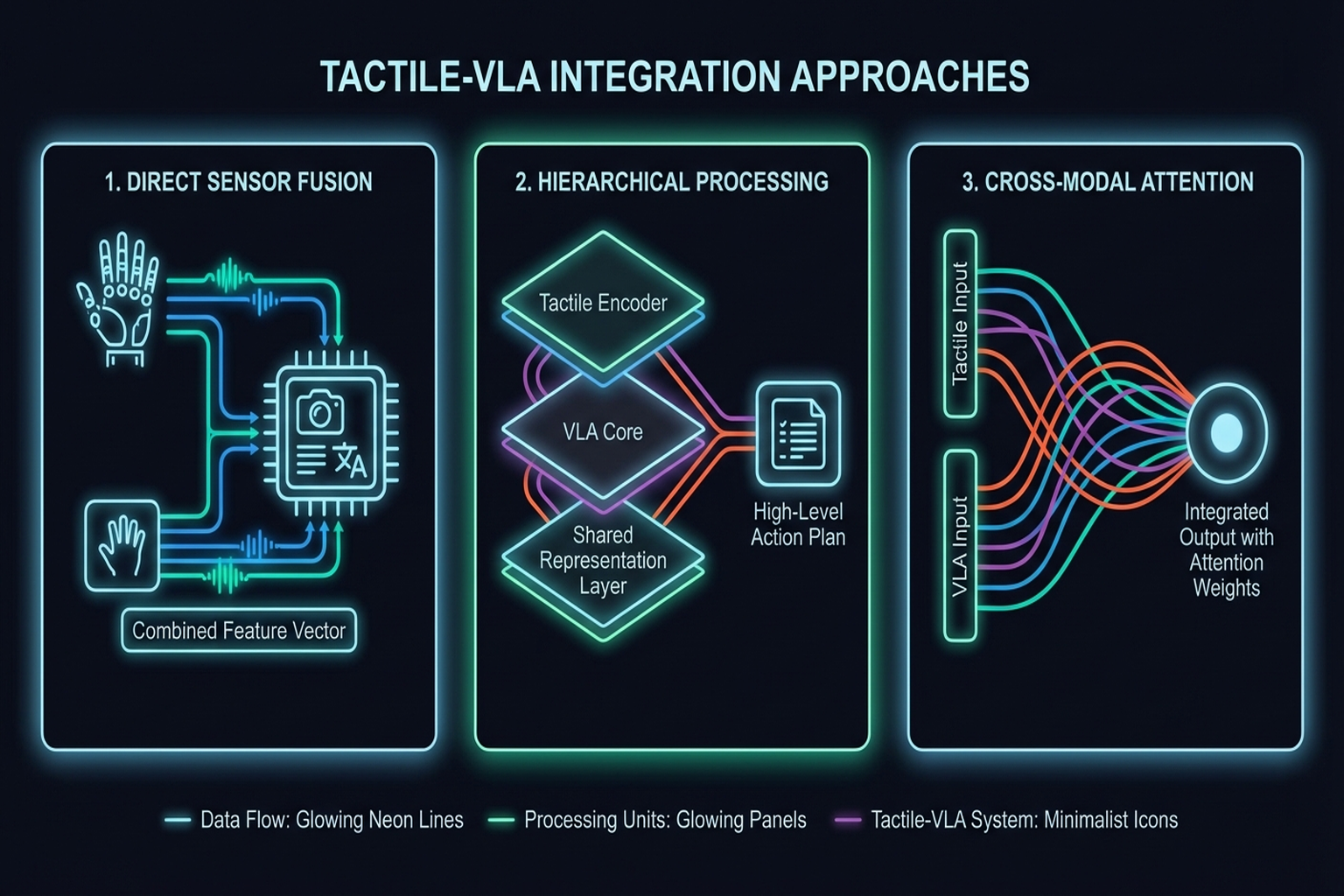

8.4 Tactile Integration: ForceVLA and Tactile-VLA

Integrating tactile/force information as a "first-class modality" in VLAs is an emerging direction.

ForceVLA (2025)

Yu et al. [9] (→ introduced in Chapter 7.4):

- FVLMoE: Dynamic routing across 4 experts

- Force sensor integration into pi0-based VLA

- +23.2 percentage points over force-free baseline

- 90% success under visual occlusion
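The "dynamic routing across 4 experts" idea can be sketched as a generic mixture-of-experts layer: a router scores each token, the top-scoring experts process it, and their outputs are mixed by the renormalized router weights. This is a generic top-k MoE under stated assumptions, not ForceVLA's FVLMoE module. The linear experts, the `top_k=2` choice, and all names are hypothetical.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def moe_forward(tokens, gate_w, expert_ws, top_k=2):
    """Route each token to its top_k experts and mix their outputs.

    tokens:    (n, d) fused vision-language-force features (hypothetical)
    gate_w:    (d, num_experts) router weights
    expert_ws: list of (d, d) matrices, one linear "expert" each
    """
    probs = softmax(tokens @ gate_w)                    # (n, num_experts)
    top = np.argsort(-probs, axis=-1)[:, :top_k]        # chosen expert ids
    top_p = np.take_along_axis(probs, top, axis=-1)
    top_p = top_p / top_p.sum(axis=-1, keepdims=True)   # renormalize weights
    out = np.zeros_like(tokens)
    for e, w in enumerate(expert_ws):
        for slot in range(top_k):
            mask = top[:, slot] == e                    # tokens sent to expert e
            out[mask] += top_p[mask, slot][:, None] * (tokens[mask] @ w)
    return out, top, top_p

rng = np.random.default_rng(0)
d, num_experts = 8, 4
tokens = rng.standard_normal((5, d))
gate_w = rng.standard_normal((d, num_experts))
expert_ws = [rng.standard_normal((d, d)) for _ in range(num_experts)]
out, top, top_p = moe_forward(tokens, gate_w, expert_ws, top_k=2)
```

Because the router depends on the input features, a token dominated by contact forces can be sent to different experts than a token dominated by visual content, which is the intuition behind using routing for force-aware control.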

Tactile-VLA (2025)

Tactile-VLA[10] unlocks VLA's physical knowledge through tactile sensing:

- Pretrained vision-language knowledge contributes to tactile generalization

- Improved generalization on contact-rich tasks

Challenges of Tactile Integration

The key challenge is temporal resolution mismatch:

- Vision: ~30 Hz

- Force/tactile: 100-1,000 Hz

- Transformer latency: Real-time constraints during inference

ForceVLA's MoE approach partially addresses this through dynamic routing, but a fundamental solution remains an open problem (→ Chapter 13.1).
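One simple way to bridge the rate mismatch described above is to group the high-rate force stream into one window per camera frame and feed the policy either the whole window or summary statistics. The sketch below assumes a 1,000 Hz six-axis force/torque stream and a 30 Hz camera. The windowing scheme and the function name are illustrative assumptions, not the method used by ForceVLA or Tactile-VLA.

```python
import numpy as np

def window_force_per_frame(force, force_hz, frame_hz, num_frames):
    """Group a high-rate force stream into one window per camera frame.

    Returns an array of shape (num_frames, window, channels), where window is
    the number of whole force samples between consecutive frames.
    """
    window = int(force_hz // frame_hz)
    windows = []
    for i in range(num_frames):
        # Index of the force sample closest to the time of frame i + 1.
        end = int(round((i + 1) * force_hz / frame_hz))
        windows.append(force[end - window:end])
    return np.stack(windows)

rng = np.random.default_rng(0)
force = rng.standard_normal((1000, 6))       # 1 s of 6-axis force/torque at 1,000 Hz
windows = window_force_per_frame(force, force_hz=1000, frame_hz=30, num_frames=30)

mean_force = windows.mean(axis=1)            # one smoothed reading per frame
peak_force = np.abs(windows).max(axis=1)     # keeps short contact spikes visible
```

Keeping a peak statistic alongside the mean matters because brief contact transients, which are the main reason to sample force at high rates, are averaged away by the mean alone.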

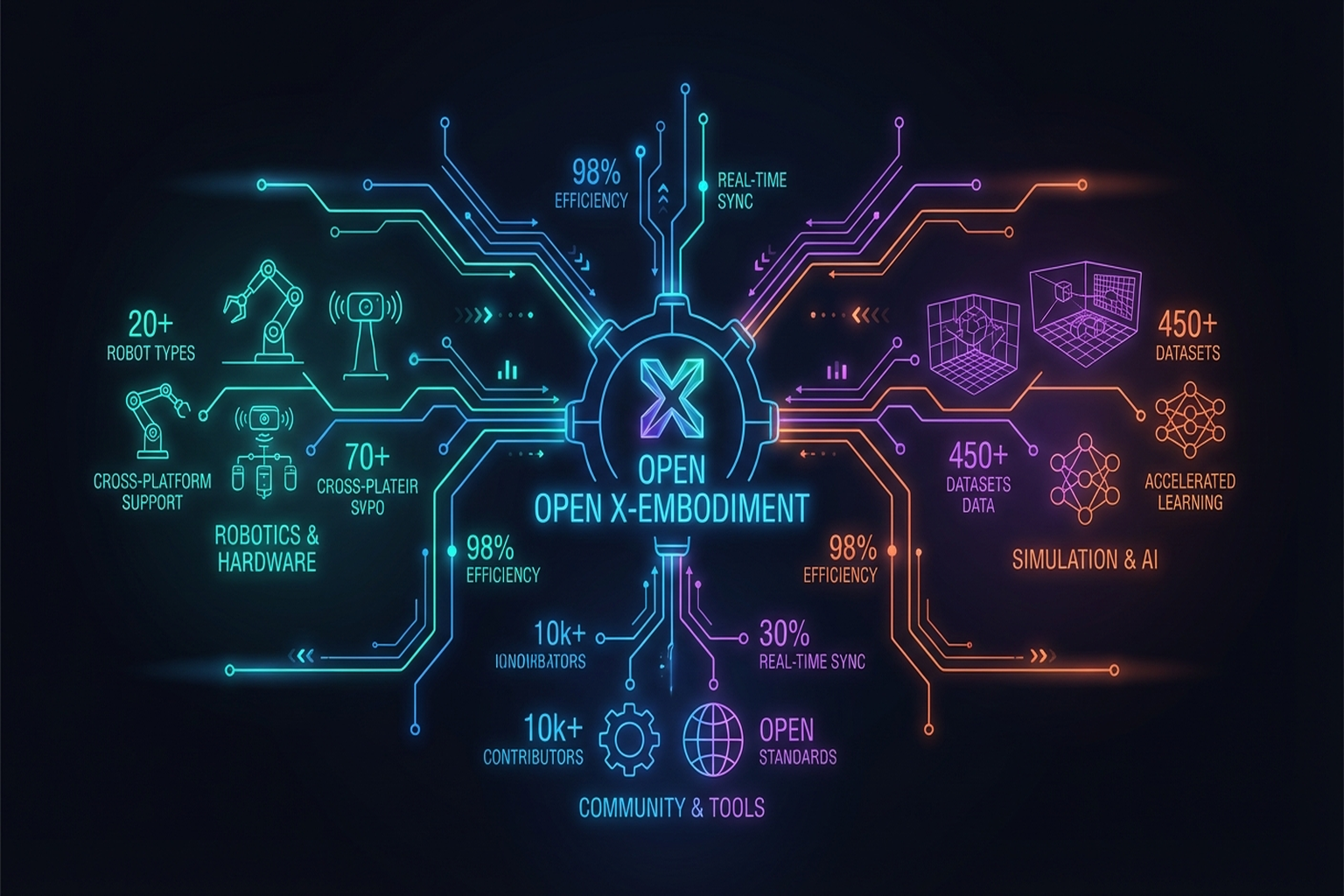

8.5 Scaling and Data: Open X-Embodiment

VLA performance depends strongly on data scale. Open X-Embodiment [7] (ICRA 2024) is the field's main answer to this data bottleneck:

- 1M+ trajectories from 34 laboratories

- 22 embodiments: Diverse robot forms

- RT-1-X: 50% improvement via cross-embodiment training

- RT-2-X: 3x performance improvement

- 300+ citations
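Training one policy on 22 embodiments raises a practical question: robots have action vectors of different sizes. One common approach, shown here as an assumption for illustration rather than as the RT-X recipe, is to zero-pad every action to a shared width and mask the padded entries out of the loss. `MAX_ACTION_DIM` and the helper names are hypothetical.

```python
import numpy as np

MAX_ACTION_DIM = 14  # hypothetical shared width, e.g. enough for a bimanual robot

def pad_action(action, max_dim=MAX_ACTION_DIM):
    """Zero-pad an action to max_dim and return it with a validity mask."""
    action = np.asarray(action, dtype=np.float64)
    padded = np.zeros(max_dim)
    mask = np.zeros(max_dim, dtype=bool)
    padded[:action.size] = action
    mask[:action.size] = True
    return padded, mask

def masked_mse(pred, target, mask):
    """Mean squared error over the valid (unpadded) action dimensions only."""
    diff = (pred - target)[mask]
    return float(np.mean(diff ** 2))

arm_action = [0.1, 0.0, -0.2, 0.0, 0.0, 0.3, 1.0]     # 7-D single-arm action
target, mask = pad_action(arm_action)

pred = target.copy()
pred[7:] = 5.0                                         # arbitrary values in padded slots
loss_ignoring_padding = masked_mse(pred, target, mask) # 0.0: padded slots are masked out
```

With the mask in place, a 7-D arm and a 14-D bimanual system can share one output head without the smaller robot being penalized for values in dimensions it does not have.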

Key Paper: Open X-Embodiment Collaboration. (2024). "Open X-Embodiment: Robotic Learning Datasets and RT-X Models." ICRA 2024. The largest open-source robot dataset from 34 labs with 1M+ trajectories. Demonstrated the power of cross-embodiment learning.

NVIDIA's synthetic data pipeline also plays a critical role: it generated 780K trajectories (equivalent to 6,500 hours of demonstrations) in 11 hours, and is reported to improve real-world performance by 40%. GR00T N1 [16] (2025) is presented as the world's first open humanoid foundation model, applying a cross-embodiment VLA to manipulation. GR00T N1.6 [17] added reasoning capabilities via Cosmos Reason.
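The synthetic data figures quoted above imply a few useful back-of-the-envelope quantities. The snippet only rearranges the three numbers given in the text. The variable names are illustrative.

```python
trajectories = 780_000
demo_hours = 6_500        # equivalent hours of demonstration data
wall_clock_hours = 11     # time taken to generate it

# Hours of demonstration produced per hour of generation (about 591x real time).
speedup = demo_hours / wall_clock_hours

# Average length of one synthetic trajectory in seconds (30 s).
mean_trajectory_s = demo_hours * 3600 / trajectories

# Trajectories generated per wall-clock hour (about 70,900).
trajectories_per_hour = trajectories / wall_clock_hours
```

The roughly 590x speedup over real-time collection is the core argument for synthetic data: the same volume would take a single teleoperation station most of a year of continuous operation.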

8.6 Limitations and Outlook for VLAs

Current Limitations

The VLA Systematic Review [2026, Information Fusion] analyzed 102 models, 26 datasets, and 12 simulation platforms to identify:

- Insufficient cross-task generalization: Analysis of 164 VLA papers at ICLR 2026 shows cross-task generalization remains inadequate

- Long-horizon task failure: Error compounding in multi-step tasks beyond 5-30 seconds

- Open-world objects: Failure on objects absent from training data

- Material properties not captured: Vision alone cannot infer friction, compliance — the case for tactile integration
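The long-horizon limitation listed above can be made concrete with a simple compounding model. If each step of a task succeeds independently with the same probability, the whole task succeeds with that probability raised to the number of steps. Independence and a constant per-step rate are simplifying assumptions, and the function names are illustrative.

```python
import math

def task_success(per_step_success, num_steps):
    """Probability that all steps succeed, assuming independent steps."""
    return per_step_success ** num_steps

def max_steps_for(target_success, per_step_success):
    """Largest number of steps that keeps overall success at or above target."""
    return math.floor(math.log(target_success) / math.log(per_step_success))

ten_step = task_success(0.95, 10)        # about 0.60
twenty_step = task_success(0.95, 20)     # about 0.36
horizon = max_steps_for(0.5, 0.95)       # 13 steps before success drops below 50%
```

Even a policy that is 95% reliable per step completes a 20-step task only about a third of the time, which is why per-step reliability and recovery behavior matter more as horizons grow.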

Architecture Design Principles

"What Matters in Building VLA Models" [2025, Nature Machine Intelligence] finds through systematic analysis:

- Hierarchical/late fusion architectures achieve best generalization

- Diffusion decoders are optimal for action generation

- These principles align with ForceVLA's MoE architecture

Gemini Robotics (2025)

Google DeepMind's Gemini Robotics[6] is a VLA family built on Gemini 2.0:

- Gemini Robotics-ER: Embodied reasoning including spatial understanding and grasp prediction

- Precision control for dexterous manipulation

- The most ambitious attempt toward a "universal robot brain"

Outlook: First of Eight Industry Trends

As detailed in Chapter 12, "VLA as Standard Brain" is the first of eight industry trends. The leading humanoid and generalist-robot efforts (Figure's Helix [18], NVIDIA's GR00T, Google DeepMind's Gemini Robotics, and Physical Intelligence's pi0) have all adopted a VLA architecture.

Summary and Outlook

VLA models have rapidly evolved from RT-1's feasibility proof to pi0's general control and Gemini Robotics' embodied reasoning. Open X-Embodiment's cross-embodiment data and NVIDIA's synthetic data address scale; ForceVLA and Tactile-VLA integrate touch as a first-class modality; pi0.6/RECAP enables continuous post-deployment improvement.

However, key challenges remain: long-horizon tasks, open-world generalization, and vision-tactile temporal alignment. These are systematically addressed in Chapter 13.

The next chapter examines Sim-to-Real transfer — bringing VLA and RL policies from simulation to reality (→ Chapter 9).

References

- Brohan, A., Brown, N., et al. (2023). RT-1: Robotics Transformer for real-world control at scale. RSS 2023. arXiv:2212.06817.

- Brohan, A., Brown, N., et al. (2023). RT-2: Vision-Language-Action models transfer web knowledge to robotic control. CoRL 2023. arXiv:2307.15818.

- Kim, M. J., Pertsch, K., Karamcheti, S., et al. (2024). OpenVLA: An open-source Vision-Language-Action model. arXiv:2406.09246.

- Octo Model Team. (2024). Octo: An open-source generalist robot policy. arXiv:2405.12213.

- Physical Intelligence. (2024). pi0: A Vision-Language-Action flow model for general robot control. arXiv:2410.24164.

- Google DeepMind. (2025). Gemini Robotics: Bringing AI into the physical world. arXiv:2503.20020.

- Open X-Embodiment Collaboration. (2024). Open X-Embodiment: Robotic learning datasets and RT-X models. ICRA 2024. arXiv:2310.08864.

- Lipman, Y., Chen, R. T. Q., Ben-Hamu, H., Nickel, M., & Le, M. (2023). Flow matching for generative modeling. ICLR 2023. arXiv:2210.02747.

- Yu, J., Liu, H., Yu, Q., et al. (2025). ForceVLA: Enhancing VLA models with a force-aware MoE for contact-rich manipulation. NeurIPS 2025.

- Huang, J., Wang, S., Lin, F., Hu, Y., Wen, C., & Gao, Y. (2025). Tactile-VLA: Unlocking vision-language-action model's physical knowledge for tactile generalization. OpenReview.

- Helmut, E., Funk, N., Schneider, T., de Farias, C., & Peters, J. (2025). Tactile-conditioned diffusion policy for force-aware robotic manipulation. ICRA 2026. arXiv:2510.13324.

- Various. (2026). VLA systematic review. Information Fusion (Elsevier). https://doi.org/10.1016/j.inffus.2025.103148.

- Various. (2025). What matters in building VLA models. Nature Machine Intelligence. https://doi.org/10.1038/s42256-025-01168-7.

- Various. (2025). Diffusion models for robotic manipulation survey. Frontiers. https://doi.org/10.3389/frobt.2025.1606247.

- Various. (2025). pi0.6/RECAP: Post-deployment RL for continuous improvement.

- NVIDIA. (2025). GR00T N1: Open humanoid foundation model.

- NVIDIA. (2026). GR00T N1.6: Added reasoning via Cosmos Reason.

- Figure AI. (2025). Helix: VLA for full humanoid upper body (35 DoF).

- Physical Intelligence. (2025). pi0 human-to-robot transfer: Human co-finetuning for generalization. Technical report.

- Various. (2025). EgoVLA: Egocentric human video pretraining for robot VLA. arXiv preprint.

- Various. (2025). PhysBrain: Physical world fine-tuning of VLMs via 3M VQA from Ego4D. arXiv preprint.