Chapter 9: Sim-to-Real Transfer — From Virtual to Reality

Overview

Transferring policies learned in simulation to physical robots is a central challenge for tactile manipulation. Tactile sim-to-real is inherently harder than visual sim-to-real because gel deformation is hard to model, the sensor couples several physical effects, and contact models have limited fidelity. This chapter covers simulation engines, domain randomization, tactile sim-to-real, Real-Sim-Real loops, the sources of the sim-to-real gap, and synthetic data.

After reading this chapter, you will be able to:

- Compare major tactile simulation engines (Isaac Gym, MuJoCo, Tacto, DiffTactile).

- Explain DeXtreme's ADR approach.

- Understand the unique challenges of tactile sim-to-real.

- Describe the Real-Sim-Real loop concept and representative examples.

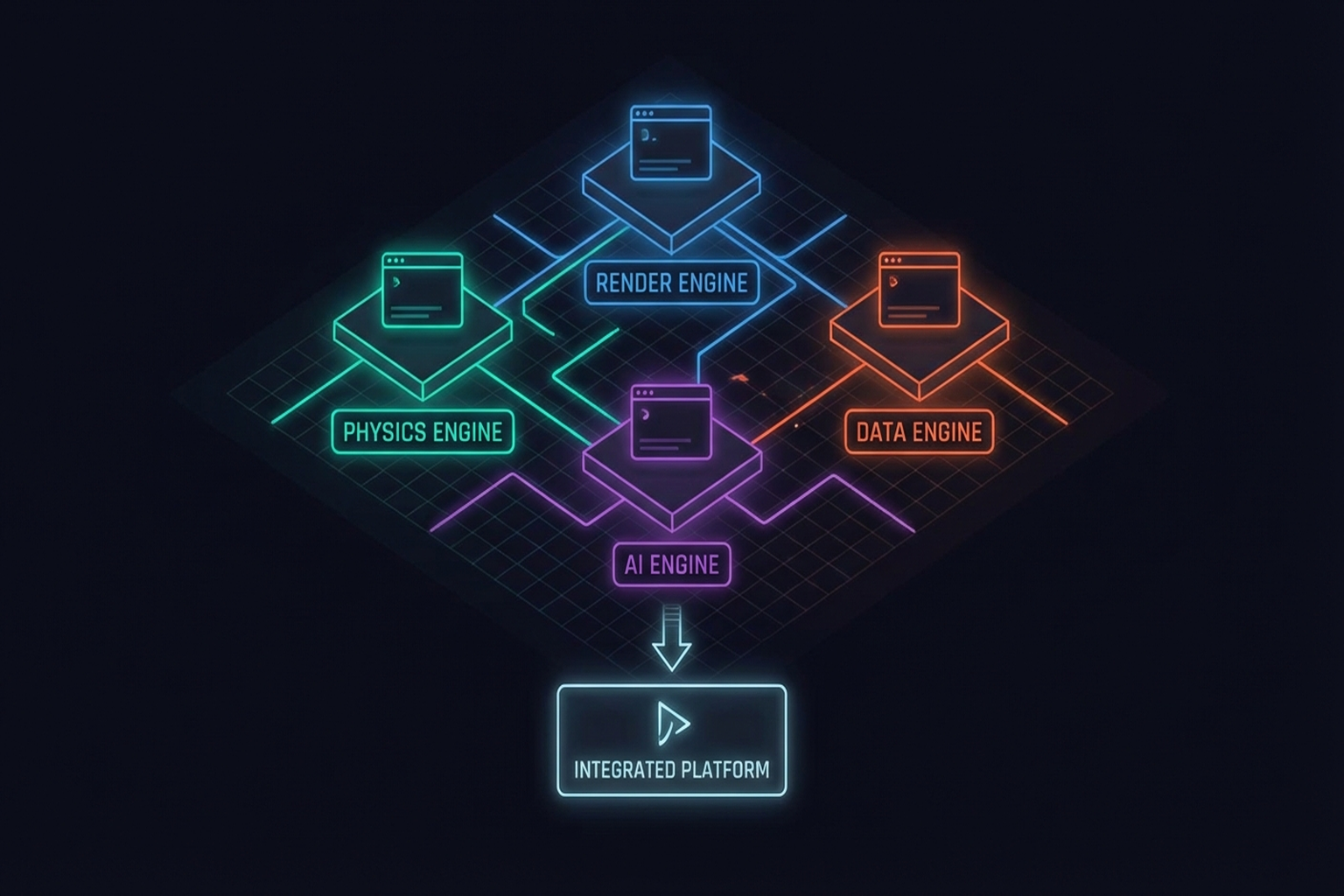

9.1 Simulation Engines: Isaac Gym/Lab, MuJoCo, Tacto, DiffTactile

Isaac Gym / Isaac Lab

NVIDIA's Isaac ecosystem is the standard for GPU-accelerated physics simulation, offering thousands of parallel environments, the Newton physics engine, and Omniverse digital twins. It is the core platform for DeXtreme [1] and GR00T [12].

MuJoCo

DeepMind's MuJoCo excels at contact-rich simulation. It is used for dynamics filtering in ExoStart [6] and for OpenAI's Dactyl [2].

Tacto (2022)

Tacto is Meta FAIR's open-source simulator for vision-based tactile sensors, built on PyRender and PyBullet. It generates synthetic tactile images for GelSight and DIGIT sensors, and the paper has more than 150 citations.

DiffTactile (2024)

DiffTactile is a differentiable tactile simulator that models deformation with FEM and supports gradient-based optimization.
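To make "gradient-based optimization" concrete, here is a minimal sketch. It does not use DiffTactile's API or its FEM model. It assumes a hypothetical one-parameter penalty contact model, F = k · max(0, d), and fits the stiffness k to synthetic force readings by gradient descent on a squared error. The gradient is derived by hand in place of automatic differentiation, and all names and values are illustrative.

```python
def contact_force(k, depth):
    """Toy penalty contact model (an assumption): force grows linearly with penetration."""
    return k * max(0.0, depth)


def fit_stiffness(depths, forces, k_init=1.0, lr=0.1, steps=500):
    """Gradient descent on the mean squared force error with respect to stiffness k."""
    k = k_init
    n = len(depths)
    for _ in range(steps):
        # d/dk of mean((k*d - F)^2) is mean(2 * (k*d - F) * d) for d > 0.
        grad = sum(
            2.0 * (contact_force(k, d) - f) * max(0.0, d)
            for d, f in zip(depths, forces)
        ) / n
        k -= lr * grad
    return k


# Synthetic "measurements" generated with a known stiffness (arbitrary units).
true_k = 3.0
depths = [0.0, 0.5, 1.0, 1.5, 2.0]
forces = [contact_force(true_k, d) for d in depths]

estimated_k = fit_stiffness(depths, forces)
print(f"estimated stiffness: {estimated_k:.3f}")
```

A differentiable simulator plays the role of `contact_force` here: because the forward model exposes gradients, physical parameters can be tuned against observations directly rather than by black-box search.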

TacEx (2024)

Integrates GelSight simulation into Isaac Sim for end-to-end research workflows (→ Chapter 11.2).

| Engine | GPU Acceleration | Tactile | Differentiable | Primary Use | Representative Work |
|---|---|---|---|---|---|
| Isaac Gym/Lab | Yes | Indirect | No | Large-scale RL | DeXtreme, GR00T |
| MuJoCo | No | Indirect | No | Contact-rich simulation | ExoStart, Dactyl |
| Tacto | No | Yes (vision) | No | Tactile image generation | DIGIT sim-to-real |
| DiffTactile | Partial | Yes | Yes | Gradient-based optimization | Contact optimization |
| TacEx | Yes | Yes (GelSight) | No | Integrated workflow | Research pipeline |

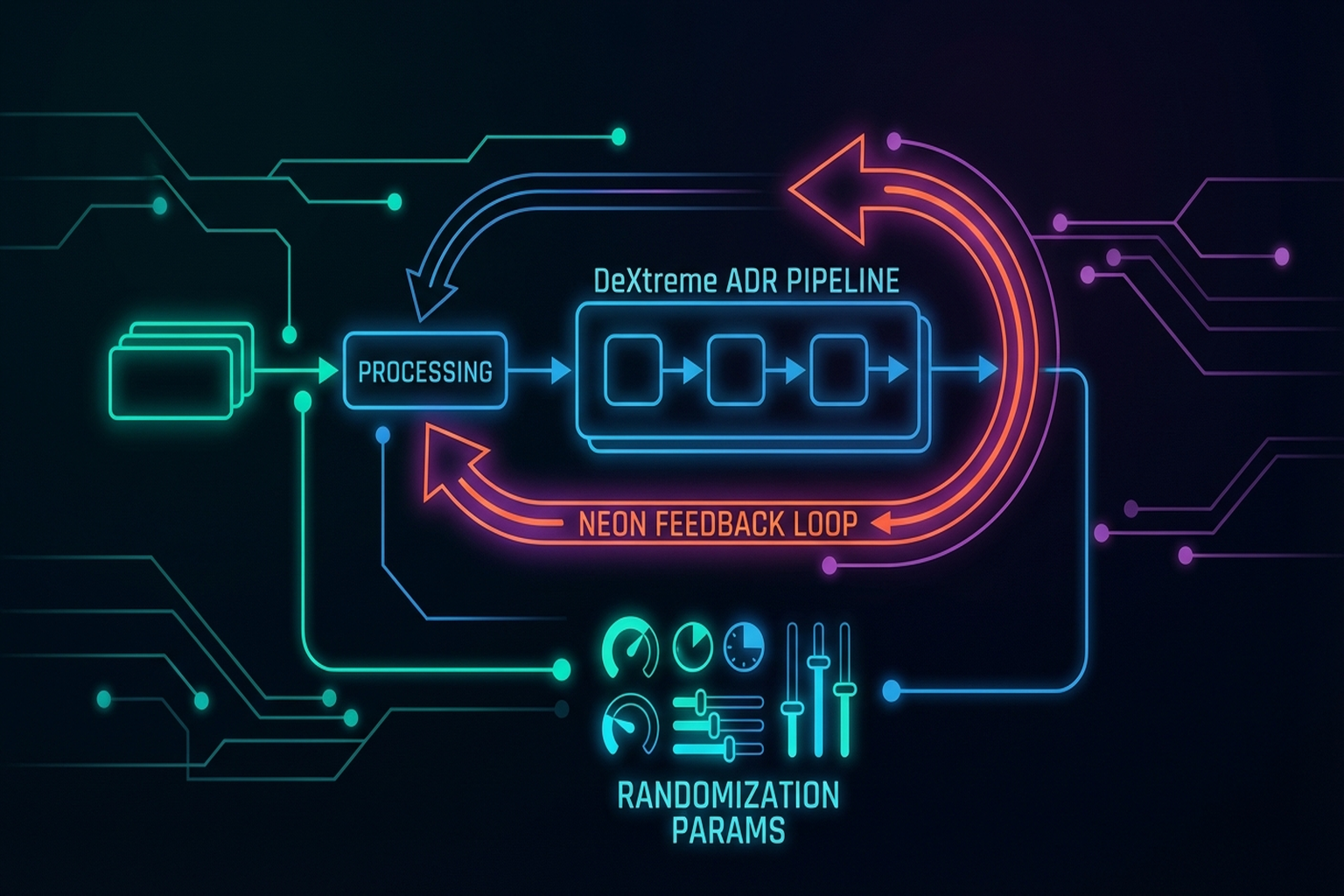

9.2 Domain Randomization: Automatic Domain Randomization in DeXtreme

Domain Randomization (DR) — randomly varying simulation physics parameters to make policies robust across conditions — is the most widely used sim-to-real strategy.
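As a minimal sketch of plain DR, the snippet below draws a fresh set of physics parameters for each episode from fixed uniform ranges. The parameter names and ranges are hypothetical and not taken from any cited system. In a real pipeline the sampled values would be written into the simulator before each reset.

```python
import random

# Hypothetical randomization ranges (low, high); values are illustrative only.
PARAM_RANGES = {
    "friction": (0.5, 1.5),
    "object_mass_kg": (0.05, 0.30),
    "joint_stiffness_scale": (0.8, 1.2),
}


def sample_physics_params(rng, ranges=PARAM_RANGES):
    """Draw one value per parameter, uniformly within its range."""
    return {name: rng.uniform(low, high) for name, (low, high) in ranges.items()}


rng = random.Random(0)  # fixed seed so the sketch is reproducible
episode_params = [sample_physics_params(rng) for _ in range(3)]
for i, params in enumerate(episode_params):
    # Here the values are only printed; a simulator would consume them instead.
    print(i, {name: round(value, 3) for name, value in params.items()})
```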

DeXtreme (2023)

DeXtreme (Handa et al., 2023, NVIDIA) [1] represents the state of the art in DR:

- Automatic Domain Randomization (ADR): Simultaneous physics + non-physics randomization

- Physics: friction, mass, joint stiffness, gravity

- Non-physics: lighting, camera position, texture, background

- Allegro Hand + Isaac Gym

- Omniverse Replicator for synthetic visual data

Key Paper: Handa, A., et al. (2023). "DeXtreme: Transfer of Agile In-hand Manipulation from Simulation to Reality." ICRA 2023. ADR with simultaneous physics/non-physics randomization is the key to sim-to-real dexterous manipulation.
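What makes ADR "automatic" is that the randomization ranges are not fixed: they widen as the policy copes with the current range and narrow when it struggles. The chapter does not spell out DeXtreme's exact update rule, so the sketch below assumes a simple boundary-evaluation scheme. The class name, thresholds, and step size are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AdrRange:
    """One randomized parameter with an adjustable [low, high] range."""
    low: float
    high: float
    step: float              # how far a boundary moves per update (hypothetical)
    min_width: float = 0.0   # the range never shrinks below this width

    def update_upper(self, boundary_success, expand_above=0.8, shrink_below=0.4):
        """Move the upper bound based on success measured at that bound."""
        if boundary_success >= expand_above:
            # Policy copes at the boundary: widen the range.
            self.high += self.step
        elif boundary_success <= shrink_below:
            # Policy struggles: narrow the range, respecting min_width.
            self.high = max(self.low + self.min_width, self.high - self.step)


friction = AdrRange(low=0.9, high=1.1, step=0.05)
for success in [0.9, 0.85, 0.3, 0.6]:
    friction.update_upper(success)
print(round(friction.high, 2))
```

The same rule would be applied to the lower bound and to every randomized parameter, physics and non-physics alike, so the curriculum of difficulty emerges from measured performance rather than hand tuning.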

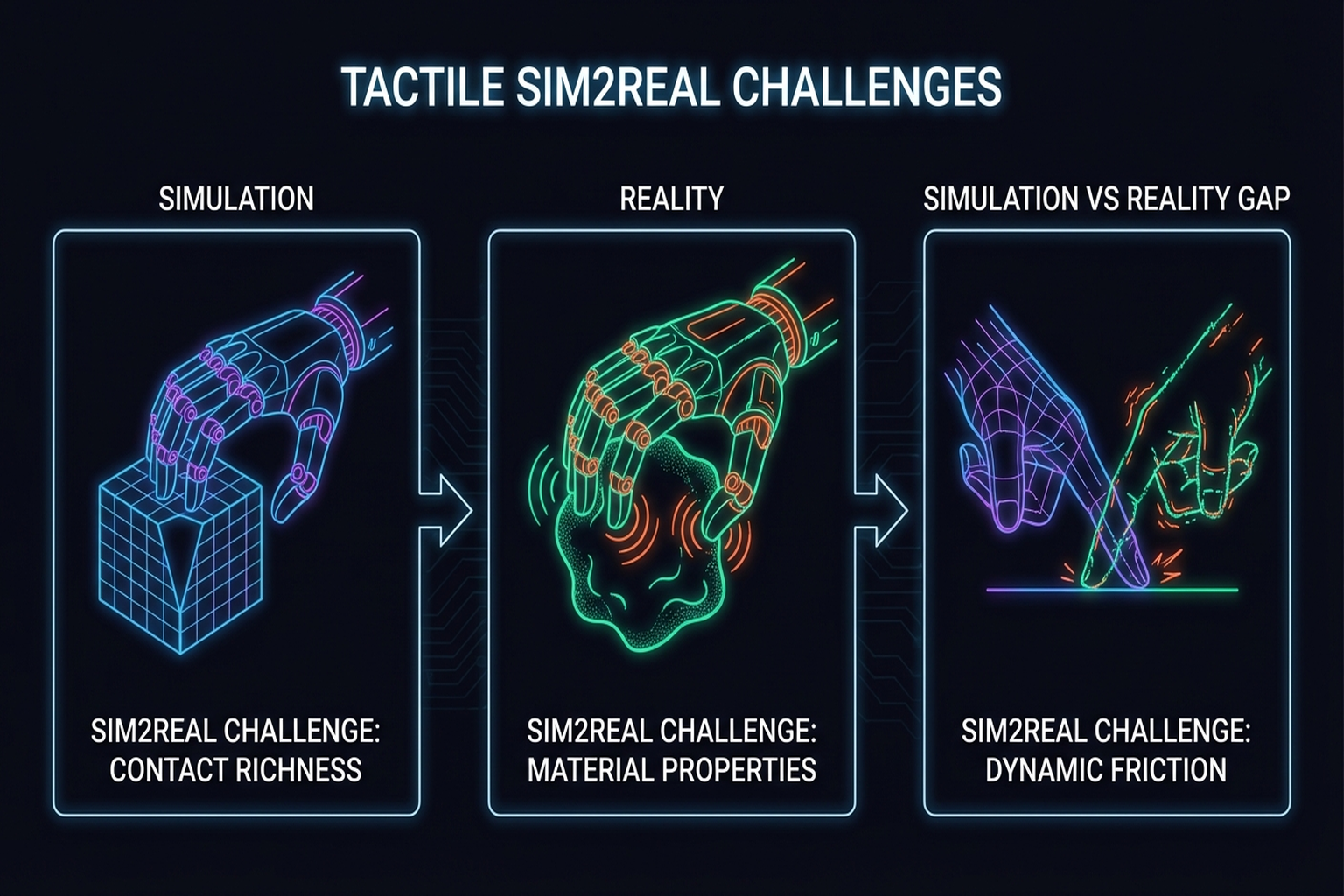

9.3 Tactile Sim-to-Real: Binary Tactile Skin Models and Zero-Shot Transfer

Tactile sim-to-real is fundamentally harder than visual sim-to-real: accurate gel deformation modeling is difficult; multi-physics coupling (optical + deformation + contact) is complex; sensor noise profiles differ between simulation and reality.

The binary 3-axis tactile skin model of Yin et al. [5] offers a practical solution:

- Simplification: Continuous force → binary contact (contact yes/no + 3-axis direction)

- 5,000 FPS simulation speed

- Zero-shot sim-to-real transfer

- 93% success on out-of-distribution objects

Key lesson: Simplified models with wide coverage can be more effective for sim-to-real transfer than precise but narrow tactile simulation.
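The simplification from continuous force to a binary signal can be sketched as a thresholding step. This is not Yin et al.'s implementation: the thresholds, the deadband, and the encoding as a contact flag plus one sign per axis are assumptions made for illustration.

```python
import math


def binarize_taxel(force_xyz, contact_threshold=0.1, axis_deadband=0.05):
    """Reduce a continuous 3-axis force (N) to a contact flag and per-axis signs."""
    magnitude = math.sqrt(sum(f * f for f in force_xyz))
    if magnitude <= contact_threshold:
        return False, (0, 0, 0)
    # Keep only the direction of each axis component; small components map to 0.
    signs = tuple(
        0 if abs(f) <= axis_deadband else (1 if f > 0 else -1)
        for f in force_xyz
    )
    return True, signs


readings = [(0.0, 0.02, 0.03), (0.4, -0.01, 1.2), (-0.3, 0.2, 0.6)]
for force in readings:
    print(force, "->", binarize_taxel(force))
```

Because the same discretization can be applied to simulated and real readings, errors in simulated force magnitude matter less: only the contact state and the sign of each component have to match.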

The work on sim-to-real reinforcement learning for vision-based dexterous manipulation on humanoids [10] provides a practical recipe covering environment modeling, reward design, policy learning, and transfer.

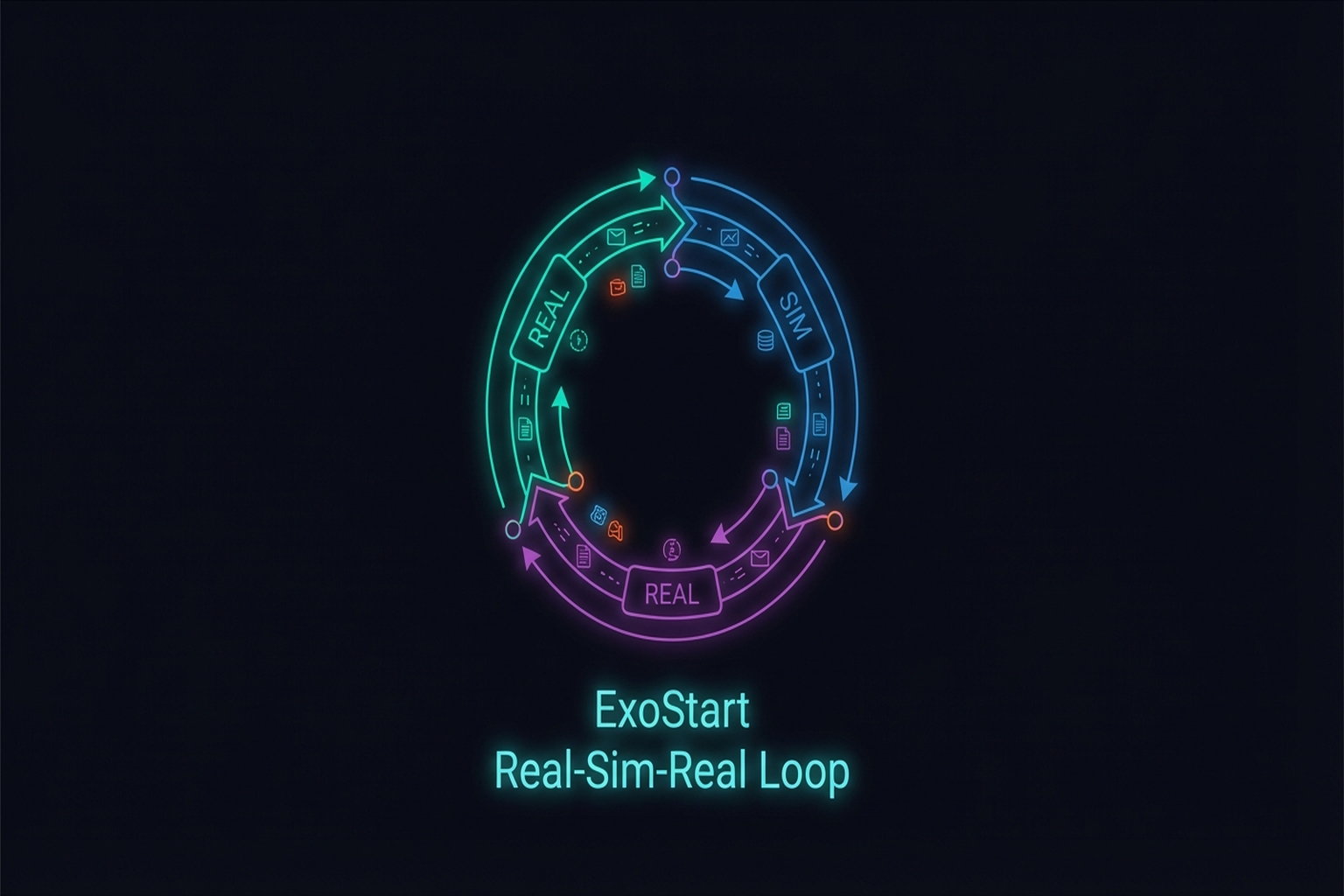

9.4 Real-Sim-Real Loops: RoboPaint, X-Sim, ExoStart

Real-Sim-Real integrates real data into simulation before transferring back to reality.

RoboPaint (2025)

RoboPaint uses 3D Gaussian Splatting (3DGS) to reconstruct real scenes in simulation, increasing visual fidelity.

X-Sim (2025)

Dan et al. [7] propose a Real-to-Sim-to-Real pipeline: real human data → simulation → policy learning → real transfer.

ExoStart (2025)

The most data-efficient Real-Sim-Real example:

- ~10 exoskeleton demos (real)

- MuJoCo dynamics filtering (sim)

- Auto-curriculum RL (sim)

- ACT vision student (distillation)

- Zero-shot real transfer → >50% success on 6 of 7 tasks

Key Paper: Si, Z., et al. (2025). "ExoStart: Efficient Learning for Dexterous Manipulation with Sensorized Exoskeleton Demonstrations." arXiv preprint. The pipeline runs from about 10 exoskeleton demos through dynamics filtering and auto-curriculum RL to zero-shot real transfer, making it the exemplar of data-efficient Real-Sim-Real.

DexWM (2025)

Meta FAIR's DexWM [arXiv Dec 2025] learns a world model from human videos:

- Combines 829 hours of human video + robot data to train a world model

- Learns policies directly from the world model without explicit simulation

- 83% real grasping success (zero-shot)

- Unlike conventional Real-Sim-Real, learns dynamics directly from data without an explicit simulation engine

- Sits between the co-training approaches (Chapter 10.6) and teleop-free approaches (Chapter 10.7)

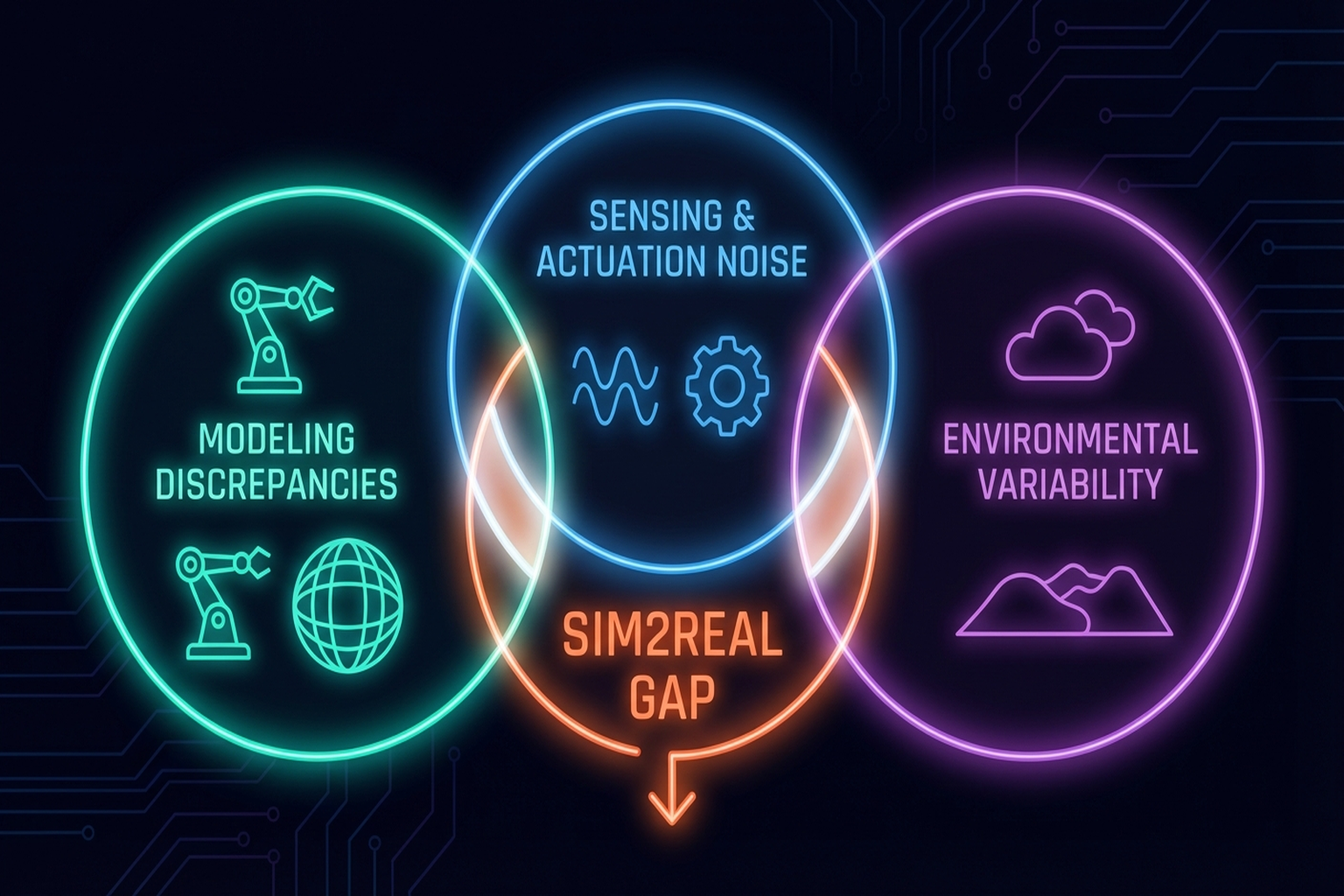

9.5 Analyzing the Sim-to-Real Gap: Dynamics, Perception, Contact Models

Three sources of the sim-to-real gap:

9.5.1 Dynamics Gap

Joint friction, stiction, contact dynamics, and deformable material behavior are difficult to model faithfully. ADR overcomes this through robustness, not precision.

9.5.2 Perception Gap

Camera images, depth maps, and tactile images differ between simulation and reality. Omniverse Replicator and RoboPaint's 3DGS reconstruction reduce this gap.

9.5.3 Contact Model Gap

This is the hardest aspect of tactile sim-to-real. FEM is precise but expensive, while analytical models are too approximate. DiffTactile addresses this with differentiable contact models, but its real-time performance remains insufficient.

Human-in-the-Loop RL [2025, Science Robotics] combines human intuition with autonomous policy optimization to achieve precise manipulation even when the sim-to-real gap is large.

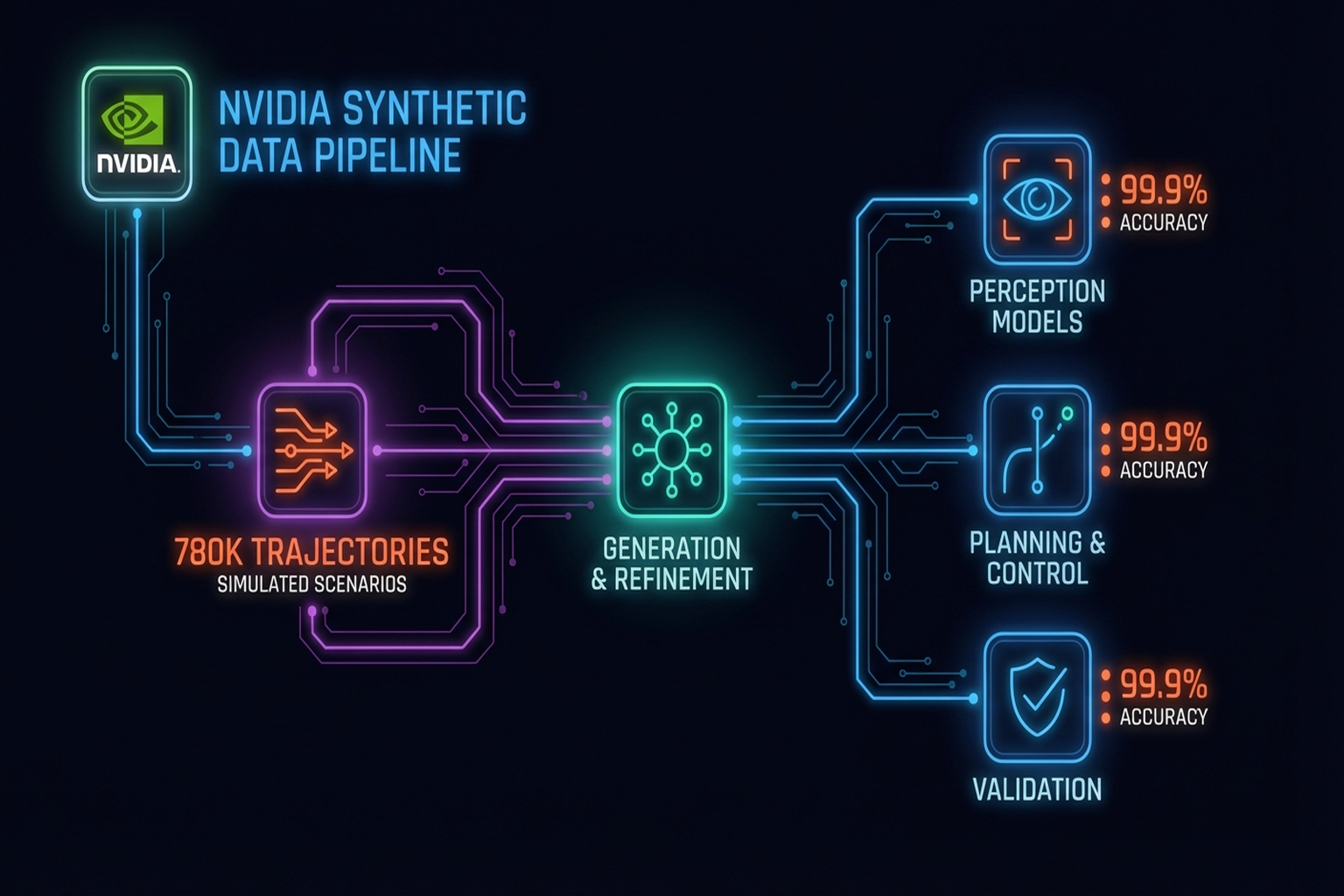

9.6 The Power and Limits of Synthetic Data

NVIDIA's synthetic data pipeline [13] is currently the most powerful data generation approach in terms of scale:

- 780K trajectories (6,500 hours equivalent) → generated in 11 hours

- 40% improvement in real performance

- Isaac Sim + Omniverse Replicator
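A quick back-of-the-envelope check puts the throughput figures above in perspective. It assumes that "6,500 hours equivalent" refers to the total duration of the generated trajectories, which the source does not state explicitly.

```python
trajectories = 780_000
equivalent_hours = 6_500
generation_hours = 11

# How much faster than real time the data was produced.
speedup = equivalent_hours / generation_hours

# Average duration of one trajectory, in seconds.
mean_trajectory_seconds = equivalent_hours * 3600 / trajectories

print(f"speedup over real time: ~{speedup:.0f}x")
print(f"mean trajectory length: {mean_trajectory_seconds:.0f} s")
```

Under that assumption, generation runs roughly 590 times faster than real time, and the average trajectory is about 30 seconds long.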

Limitations remain clear:

- Sim-to-real gap constrains synthetic data effectiveness

- Tactile synthetic data has a larger gap than visual

- Material property diversity is difficult to simulate

Summary and Outlook

Sim-to-real transfer is simultaneously one of the largest bottlenecks and fastest-advancing areas of tactile manipulation. DeXtreme's ADR, Yin et al.'s simplified tactile model, and ExoStart's data-efficient Real-Sim-Real represent the current frontier. NVIDIA's synthetic data addresses scale, but the tactile sim-to-real gap remains fundamentally larger than the visual gap, requiring advances along the DiffTactile/TacEx direction.

The next chapter examines Embodiment Retargeting — transferring skills from human to robot (→ Chapter 10).

References

- Handa, A., et al. (2023). DeXtreme: Transfer of agile in-hand manipulation from simulation to reality. ICRA 2023. arXiv:2210.13702.

- Various. (2020). OpenAI Dactyl: Solving Rubik's Cube with a robot hand. IJRR.

- Wang, S., Lambeta, M., et al. (2022). Tacto: A fast, flexible, and open-source simulator for vision-based tactile sensors. IEEE RA-L.

- Si, Z., Zhang, G., Ben, Q., Romero, B., Xian, Z., Liu, C., & Gan, C. (2024). DiffTactile: A physics-based differentiable tactile simulator for contact-rich robotic manipulation. ICLR 2024.

- Yin, Z.-H., et al. (2024). Learning in-hand translation using a binary 3-axis tactile skin. arXiv preprint.

- Si, Z., Qian, K., Sontakke, N., et al. (2025). ExoStart: Efficient learning for dexterous manipulation with sensorized exoskeleton demonstrations. arXiv preprint.

- Dan, Y., et al. (2025). X-Sim: Real-to-Sim-to-Real pipeline.

- Various. (2025). RoboPaint: 3DGS for Real-Sim-Real visual transfer.

- Various. (2024). TacEx: GelSight simulation in Isaac Sim.

- Various. (2025). Sim-to-real reinforcement learning for vision-based dexterous manipulation on humanoids. arXiv preprint. arXiv:2502.20396.

- Various. (2025). Human-in-the-loop RL for precise dexterous manipulation. Science Robotics. https://doi.org/10.1126/scirobotics.ads5033.

- NVIDIA. (2025). GR00T N1: An open foundation model for generalist humanoid robots. arXiv preprint. arXiv:2503.14734.

- NVIDIA. (2026). Synthetic data pipeline: 780K trajectories in 11 hours. GTC 2026 Keynote.

- Various. (2025). Tactile Robotics: Past and Future. arXiv:2512.01106.

- Lipman, Y., et al. (2023). Flow matching for generative modeling. ICLR 2023.

- Various. (2025). DexWM: Dexterous world models from human video. arXiv preprint. Meta FAIR.