Chapter 11: Research Integration — Toward Unified Systems

Overview

The preceding ten chapters covered individual components: sensors, data, hands, learning, and transfer. This chapter examines how those components integrate into unified systems. We survey multi-modal fusion architectures, end-to-end system case studies, the open-source ecosystem, and the state of benchmarks and standardization.

After reading this chapter, you will be able to:

- Compare major visuo-tactile fusion architectures (early/late/MoE).

- Explain the systemic significance of Mobile ALOHA, PP-Tac, and the Seminar 3 integrated gripper.

- Understand how open-source hardware/software/data accelerates research.

- Assess the current state of, and need for, benchmarks like RGMC.

11.1 Multi-Modal Fusion Architectures

Visuo-Tactile Fusion

Robot Synesthesia [1]: Point cloud-based visuo-tactile fusion with PointNet. Generalizes to novel objects (→ Chapter 3.1.4).

NeuralFeels [2]: Neural field-based visuotactile perception. Simultaneous pose and shape estimation inside the hand. Science Robotics (→ Chapter 1.3.4).

3D-ViTac [3]: Dense tactile (3mm²) + vision unified 3D representation. 85-90% bimanual success with Diffusion Policy vs. 45-50% vision-only (→ Chapter 2.4).

Force-Vision-Language Fusion

ForceVLA [4]: FVLMoE dynamic routing across 4 experts. +23.2 pp success rate, 90% under occlusion (→ Chapters 7.4, 8.4).

Tactile-VLA [5]: Unlocks VLA physical knowledge through tactile sensing (→ Chapter 8.4).
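To make the idea of dynamic routing concrete, the following is a minimal sketch of top-k softmax gating over four experts. It is not ForceVLA's FVLMoE implementation: the function names (`softmax`, `route_top_k`), the choice of k=2, and the toy gate scores and expert outputs are all illustrative assumptions. In a real system the gate scores would typically come from a learned network over the fused vision, language, and force tokens.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, expert_outputs, k=2):
    """Mix the outputs of the k highest-scoring experts.

    gate_scores:    one score per expert (hypothetical gate output).
    expert_outputs: one feature vector per expert, all the same length.
    Returns (selected expert indices, mixing weights, mixed vector).
    """
    # Rank experts by gate score and keep the best k (ties keep index order).
    ranked = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    # Renormalize the gate over the selected experts only.
    weights = softmax([gate_scores[i] for i in top])
    dim = len(expert_outputs[0])
    mixed = [0.0] * dim
    for w, i in zip(weights, top):
        for d in range(dim):
            mixed[d] += w * expert_outputs[i][d]
    return top, weights, mixed


# Toy example with 4 experts and 2-dimensional outputs (made-up numbers).
gate_scores = [0.1, 2.0, -1.0, 2.0]
expert_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 3.0]]
top, weights, mixed = route_top_k(gate_scores, expert_outputs, k=2)
```

Here experts 1 and 3 tie for the highest score, so each receives weight 0.5 and the mixed output is the average of their vectors. Because the gate is recomputed for every input, the mixture can shift toward different experts as the available sensory evidence changes.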

Representation Alignment

UniTouch [6]: Contrastive alignment of touch-vision-language-audio. Zero-shot classification (→ Chapter 3.6).

Sparsh [7]: 460K+ image self-supervised tactile foundation model (→ Chapter 3.6).

VTV-LLM [8]: Pre-contact physical property inference via visuo-tactile video + LLMs.
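Contrastive alignment and zero-shot classification, as mentioned for UniTouch above, can be illustrated with a generic InfoNCE-style loss. This is a sketch under stated assumptions, not the UniTouch training objective: the function names, the one-directional (touch to vision) loss, the temperature of 0.1, and the toy 2-D embeddings are all hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    """Scale a vector to unit length so dot products are cosine similarities."""
    n = math.sqrt(dot(v, v))
    return [x / n for x in v]


def info_nce(touch_embs, vision_embs, temperature=0.1):
    """Mean cross-entropy of matching touch sample i to vision sample i.

    Pair i is the positive; every other vision embedding in the batch
    acts as a negative.
    """
    t = [normalize(e) for e in touch_embs]
    v = [normalize(e) for e in vision_embs]
    loss = 0.0
    for i, ti in enumerate(t):
        logits = [dot(ti, vj) / temperature for vj in v]
        m = max(logits)
        log_z = m + math.log(sum(math.exp(l - m) for l in logits))
        loss += log_z - logits[i]
    return loss / len(t)


def zero_shot_label(touch_emb, class_embs):
    """Pick the class whose embedding is most similar to the touch embedding."""
    t = normalize(touch_emb)
    sims = {name: dot(t, normalize(e)) for name, e in class_embs.items()}
    return max(sims, key=sims.get)


# Toy batch: aligned pairs should give a much lower loss than swapped pairs.
touch = [[1.0, 0.0], [0.0, 1.0]]
aligned_loss = info_nce(touch, [[1.0, 0.0], [0.0, 1.0]])
swapped_loss = info_nce(touch, [[0.0, 1.0], [1.0, 0.0]])
label = zero_shot_label([0.9, 0.1], {"smooth": [1.0, 0.0], "rough": [0.0, 1.0]})
```

Once modalities share an embedding space, zero-shot classification reduces to a nearest-neighbor lookup against class embeddings from another modality (for example, text), which is what `zero_shot_label` mimics.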

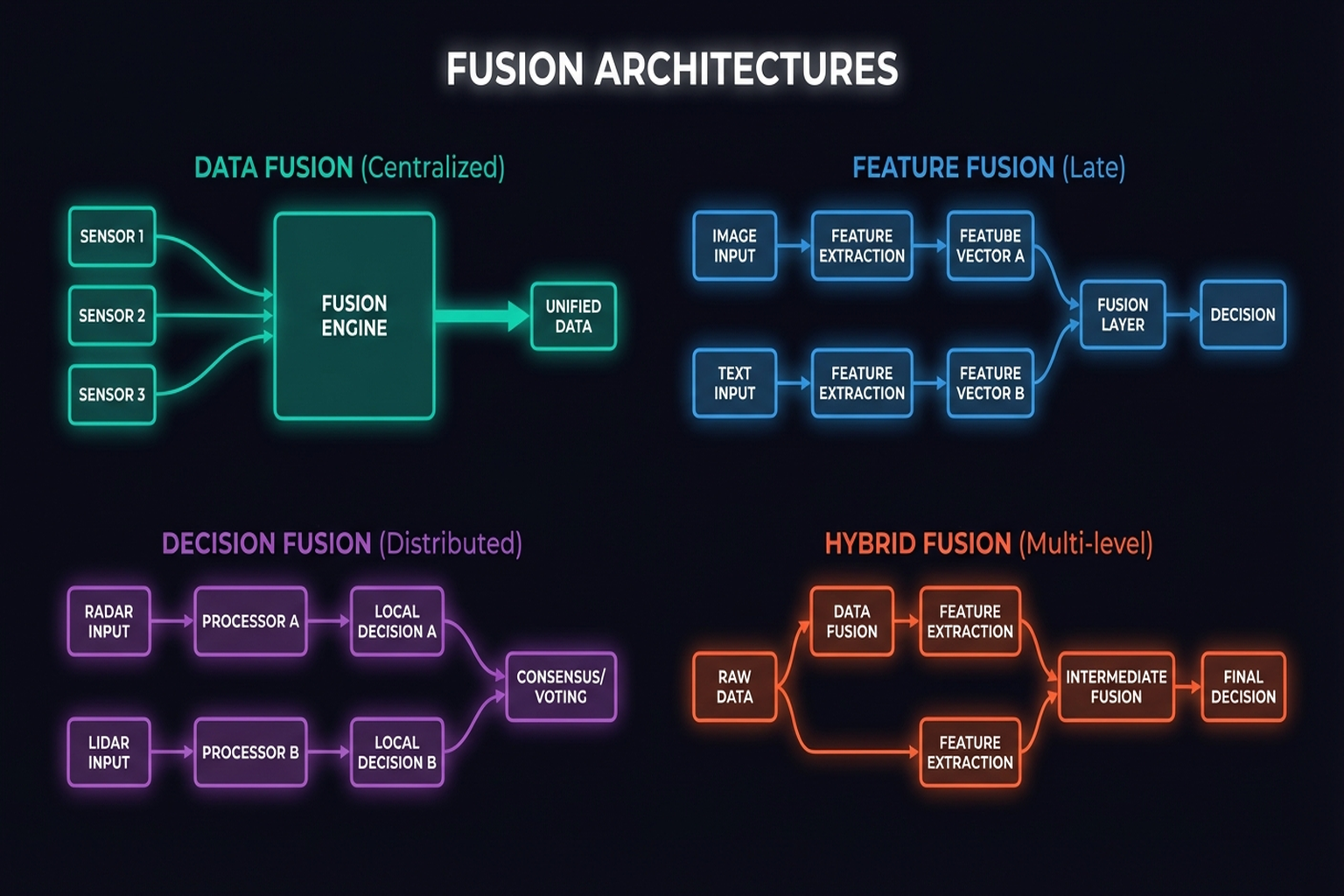

Fusion Architecture Comparison

| Architecture | Strengths | Weaknesses | Representative |
|---|---|---|---|
| Early fusion | Low-level feature combination | Cross-modal interference | 3D-ViTac |
| Late fusion | Independent modality learning | Limited cross-modal interaction | NeuralFeels |
| MoE (dynamic routing) | Task-optimal fusion | Training complexity | ForceVLA |
| Attention-based | Flexible weighting | Computation cost | Transformer VLAs |
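The structural difference between the first two rows of the table can be sketched in a few lines. This is a generic illustration with single linear layers, not the architecture of 3D-ViTac or NeuralFeels; the function names, the fixed mixing weight `alpha`, and all numbers are assumptions.

```python
def linear(weights, bias, x):
    """Apply y = W x + b, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]


def early_fusion(vision_feat, tactile_feat, joint_w, joint_b):
    """Concatenate raw features first, then run one joint model."""
    return linear(joint_w, joint_b, vision_feat + tactile_feat)


def late_fusion(vision_feat, tactile_feat, vis_head, tac_head, alpha=0.5):
    """Run one model per modality, then blend their outputs."""
    v = linear(vis_head[0], vis_head[1], vision_feat)
    t = linear(tac_head[0], tac_head[1], tactile_feat)
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(v, t)]


# Toy features: 2-D vision, 1-D tactile (made-up values).
vision = [1.0, 2.0]
tactile = [3.0]

# Early fusion: one weight matrix sees all three inputs at once.
early = early_fusion(vision, tactile, joint_w=[[1.0, 0.0, 1.0]], joint_b=[0.0])

# Late fusion: each modality has its own head; only outputs are combined.
late = late_fusion(vision, tactile,
                   vis_head=([[1.0, 1.0]], [0.0]),
                   tac_head=([[2.0]], [0.0]))
```

In the early variant the joint weights can learn cross-modal interactions but also let one modality's noise affect the other, which matches the "cross-modal interference" entry. In the late variant the heads never see each other's inputs, which matches "limited cross-modal interaction".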

11.2 End-to-End System Case Studies

Mobile ALOHA (2024)

Fu, Zhao, and Finn (Stanford) [9]: Low-cost mobile bimanual system with an ACT-based policy. With ~200 citations, it is among the most influential end-to-end research systems.

TacEx (2024)

GelSight simulation integrated in Isaac Sim [10]. Complete workflow: sensor sim → policy learning → sim-to-real in one platform.

PP-Tac (2025)

R-Tac + slip detection + Diffusion Policy → 87.5% success on thin-object grasping [11]. Integrates sensor + perception + control + learning to solve a practical problem.
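How a slip signal can feed back into control is easiest to see with a textbook Coulomb-friction check. The sketch below is not PP-Tac's slip detector, whose details are not given here; the function names, the friction coefficient of 0.5, and the 0.9 safety margin are hypothetical values chosen only for illustration.

```python
import math


def is_slipping(normal_force, shear_x, shear_y,
                friction_coeff=0.5, margin=0.9):
    """Flag slip when shear force nears the friction-cone boundary.

    Coulomb model: the contact holds while |shear| <= mu * normal.
    The margin (< 1) raises the flag slightly before that limit.
    """
    if normal_force <= 0.0:
        # No pressing force means the contact cannot resist any shear.
        return True
    shear = math.hypot(shear_x, shear_y)
    return shear > margin * friction_coeff * normal_force


def min_grip_force(shear_x, shear_y, friction_coeff=0.5, margin=0.9):
    """Smallest normal force that keeps the shear inside the margin."""
    shear = math.hypot(shear_x, shear_y)
    return shear / (margin * friction_coeff)


# Toy readings in newtons (made-up numbers).
stable = is_slipping(2.0, 0.3, 0.4)    # shear 0.5 N against a 0.9 N limit
slipping = is_slipping(2.0, 0.6, 0.8)  # shear 1.0 N against a 0.9 N limit
needed = min_grip_force(0.6, 0.8)      # force the gripper should apply
```

A learned policy could consume the slip flag as an extra observation, or a low-level controller could raise the grip force toward `min_grip_force` whenever the flag is set.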

Seminar 3 Integrated Gripper

Underactuation + VSA + Active Belt for factory automation. Physical integration of mechanism (Chapter 5) + sensing + control.

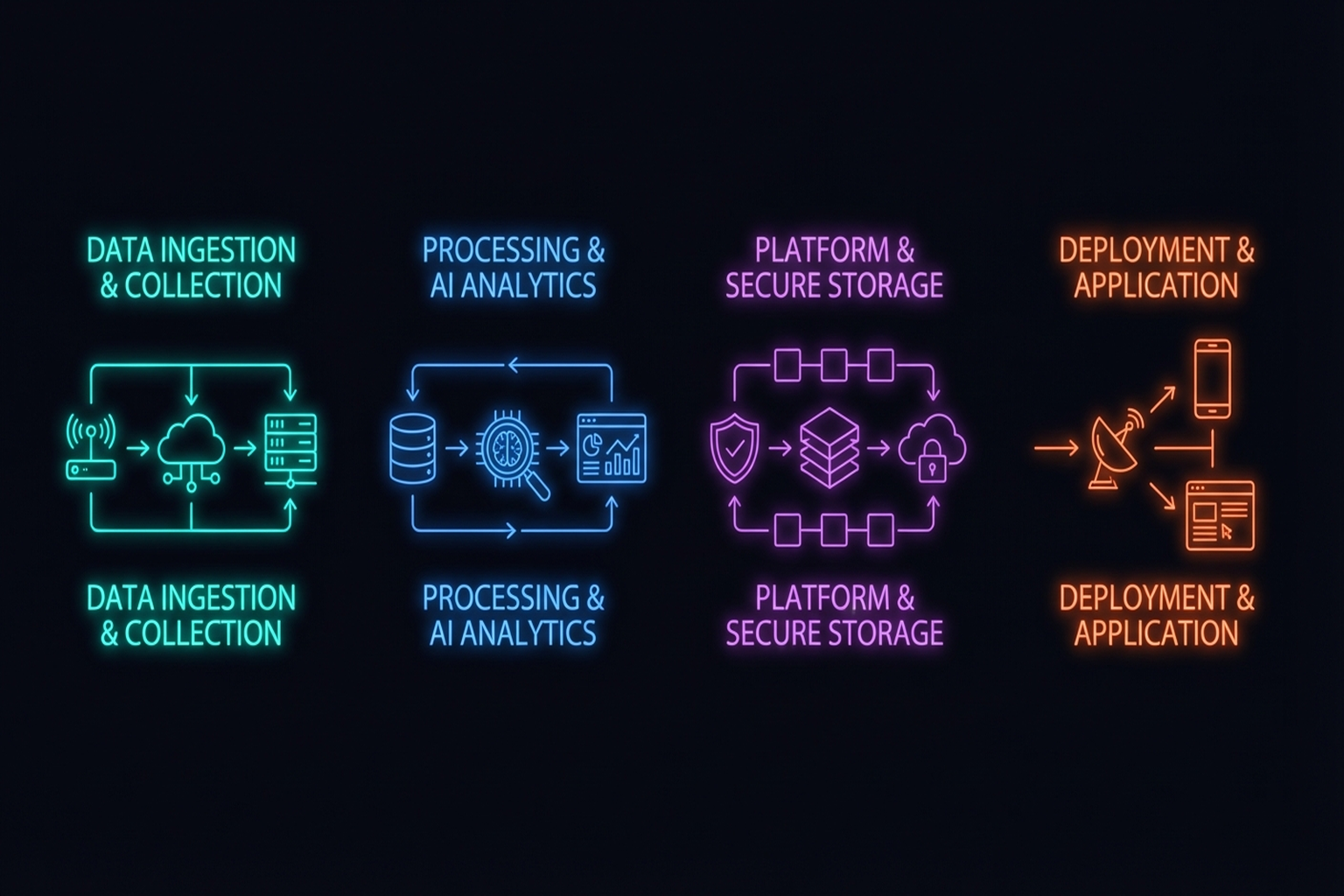

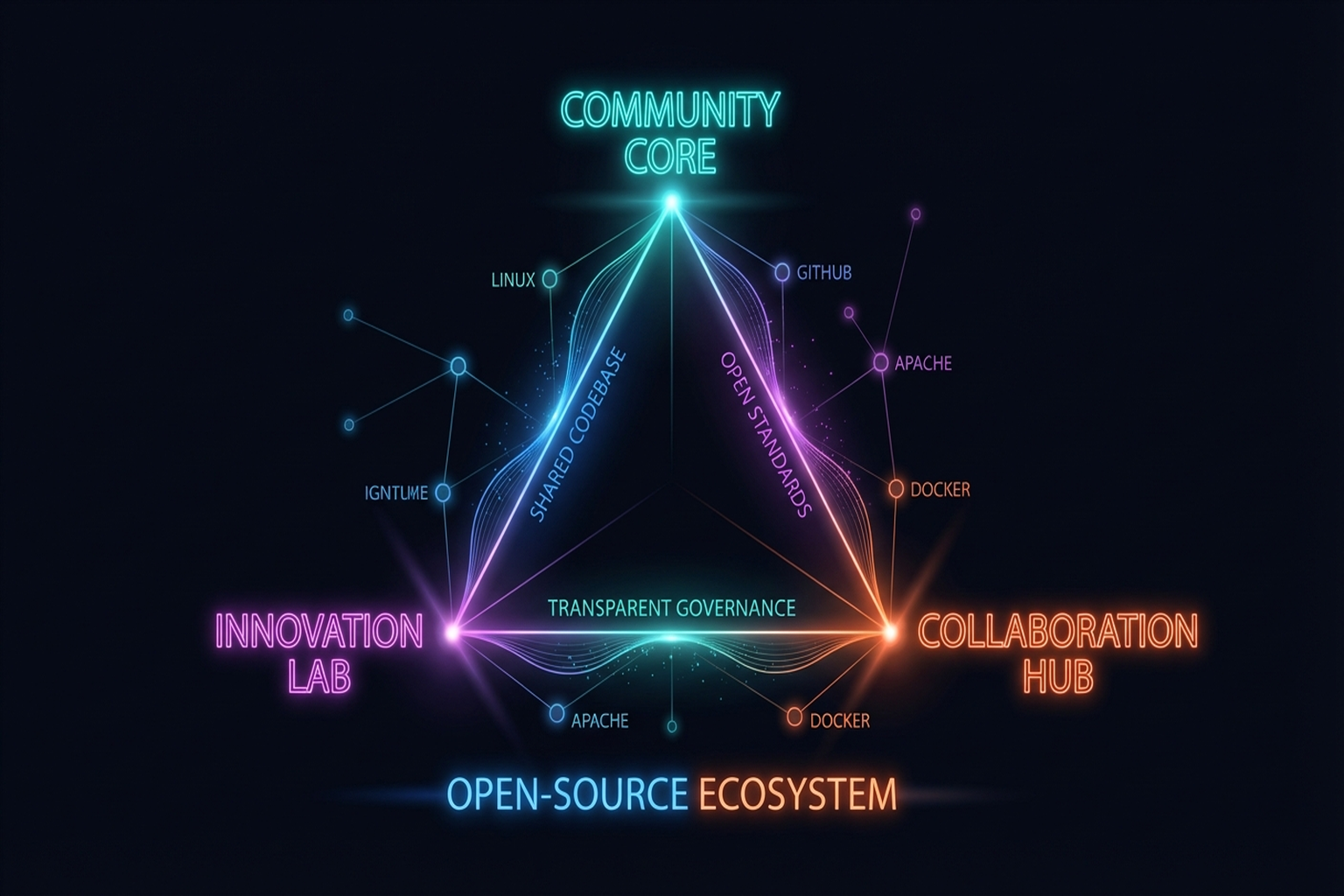

11.3 The Open-Source Ecosystem and Research Acceleration

Hardware

LEAP Hand ($2K), ORCA (17-DoF), ISyHand ($1.3K), OSMO glove

Software

OpenVLA (7B VLA), Octo, Diffusion Policy, ACT/ALOHA

Data

Open X-Embodiment (1M+ trajectories), Touch-and-Go (3M+ contacts), Touch100k, VTDexManip

Open-source releases have substantially improved reproducibility and research speed. Before 2023, dexterous manipulation research required $16K-100K of hardware; it can now start at about $2K (LEAP Hand), roughly an eightfold drop in the entry cost.

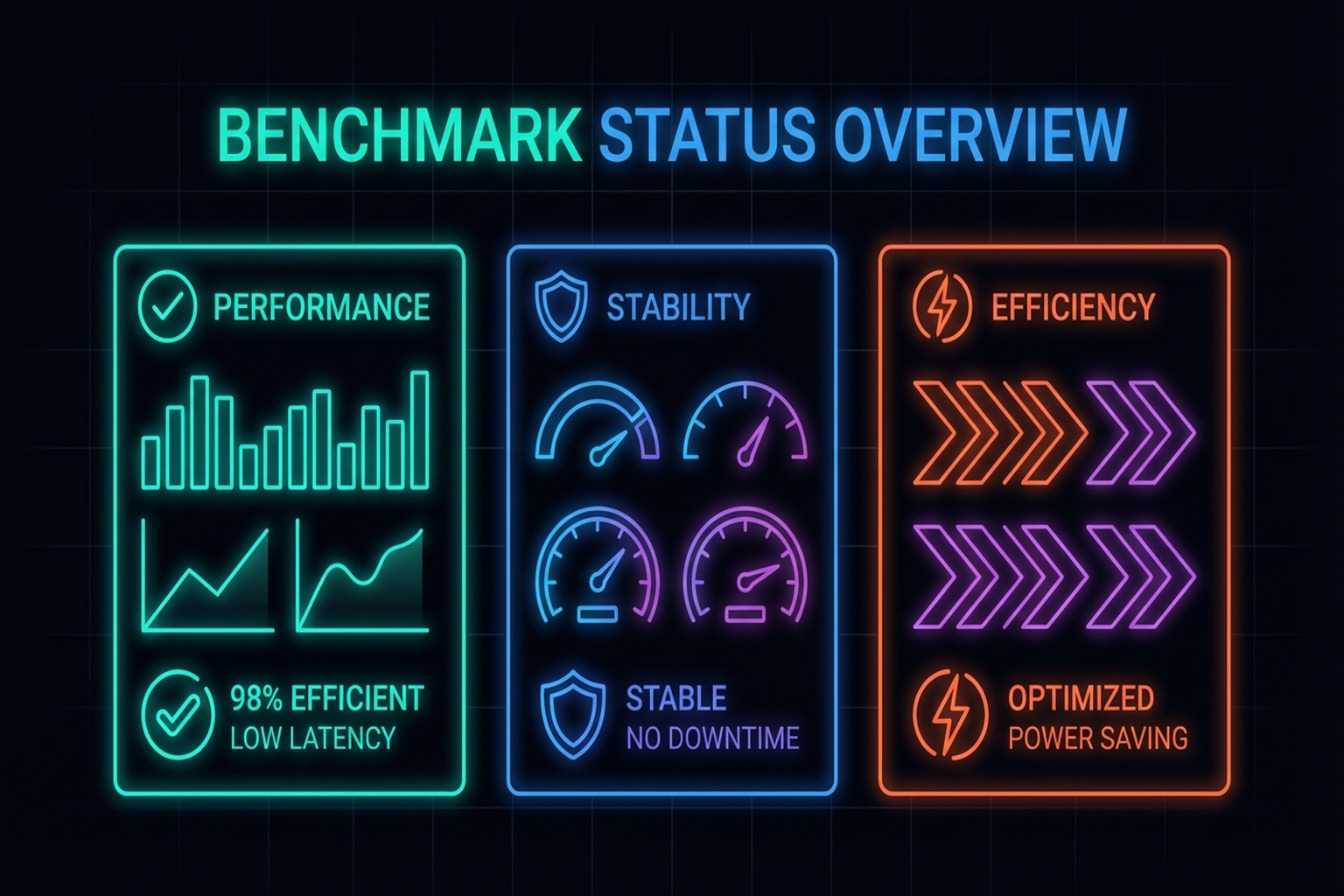

11.4 Benchmarks and Standardization

RGMC

The Robotic Grasping and Manipulation Competition (RGMC) is held annually at ICRA. The 2025 champion [12] used an optimization-based rather than a learning-based approach, demonstrating methodological diversity (→ Chapter 7.5).

Absent Tactile Sensor Benchmarks

Vision has ImageNet and COCO; tactile sensing has no established benchmark. This hinders sensor comparison and reproducibility.

Data Format Standardization

The six tactile data structures identified by Albini et al. [13] (→ Chapter 3) are candidates for a de facto standard.

Cross-Embodiment Evaluation

Open X-Embodiment [14] enables consistent performance comparison across diverse robots.
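One way to keep such comparisons consistent is to report success per embodiment and then average across embodiments, so that a robot with many episodes does not dominate the score. The sketch below assumes a simple `(robot_name, success)` episode log; the log format, the function names, and the robot labels are hypothetical and not part of Open X-Embodiment.

```python
from collections import defaultdict


def success_by_embodiment(episodes):
    """Return {robot: success rate} from (robot, success) pairs."""
    counts = defaultdict(lambda: [0, 0])  # robot -> [successes, trials]
    for robot, success in episodes:
        counts[robot][0] += int(success)
        counts[robot][1] += 1
    return {robot: s / n for robot, (s, n) in counts.items()}


def macro_average(rates):
    """Average of per-robot rates: every embodiment counts equally."""
    return sum(rates.values()) / len(rates)


def micro_average(episodes):
    """Pooled success rate: every episode counts equally."""
    return sum(int(success) for _, success in episodes) / len(episodes)


# Toy log: 4 episodes on one robot, 2 on another (made-up results).
episodes = [
    ("arm_a", True), ("arm_a", True), ("arm_a", False), ("arm_a", True),
    ("hand_b", True), ("hand_b", False),
]
rates = success_by_embodiment(episodes)
macro = macro_average(rates)
micro = micro_average(episodes)
```

In this toy log the macro average (0.625) is lower than the pooled rate (about 0.667) because the weaker embodiment has fewer episodes. Stating which average is reported avoids that ambiguity.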

Multimodal tactile-vision for housekeeping [15] (Nature Communications, 2024) demonstrates end-to-end multi-modal integration (pressure, temperature, texture, and slip sensing plus vision) in household environments.

Summary and Outlook

System integration matters as much as individual component advances. ForceVLA's MoE fusion, Mobile ALOHA's low-cost bimanual system, PP-Tac's practical problem solving, and Seminar 3's mechanism integration each demonstrate that "the whole is greater than the sum of its parts." The open-source ecosystem accelerates integration, and establishing standardized benchmarks is the next challenge.

The next chapter, Physical AI and Industry Outlook, examines how research achievements translate into industry (→ Chapter 12).

References

- [1] Yuan, Y., et al. (2024). Robot Synesthesia. ICRA 2024.

- [2] Suresh, S., et al. (2024). NeuralFeels. Science Robotics, 9(86).

- [3] Huang, B., et al. (2024). 3D-ViTac. CoRL 2024.

- [4] Yu, J., et al. (2025). ForceVLA: Enhancing VLA models with a force-aware MoE for contact-rich manipulation. NeurIPS 2025.

- [5] Various. (2025). Tactile-VLA. OpenReview.

- [6] Yang, F., et al. (2024). UniTouch. CVPR 2024.

- [7] Higuera, C., et al. (2024). Sparsh. CoRL 2024.

- [8] Liu, K., et al. (2025). VTV-LLM: Robotic perception with a large tactile-vision-language model. arXiv preprint. arXiv:2506.19303.

- [9] Fu, Z., Zhao, T. Z., & Finn, C. (2024). Mobile ALOHA: Learning bimanual mobile manipulation with low-cost whole-body teleoperation. arXiv preprint. arXiv:2401.02117.

- [10] Various. (2024). TacEx: GelSight tactile simulation in Isaac Sim. arXiv preprint. arXiv:2411.04776.

- [11] Various. (2025). PP-Tac. RSS 2025.

- [12] Yu, M., et al. (2025). RGMC Champion. IEEE RA-L.

- [13] Albini, A., et al. (2025). Tactile data representation review. arXiv (IEEE T-RO).

- [14] Open X-Embodiment Collaboration. (2024). ICRA 2024.

- [15] Mao, Q., Liao, Z., Yuan, J., & Zhu, R. (2024). Multimodal tactile sensing fused with vision for dexterous robotic housekeeping. Nature Communications, 15, 6871. https://doi.org/10.1038/s41467-024-51261-5

- [16] Various. (2025). Simultaneous tactile-visual perception for learning multimodal robot manipulation. arXiv preprint. arXiv:2512.09851.

- [17] Various. (2025). Multimodal fusion and vision-language models: A survey for robot vision. Information Fusion (Elsevier). arXiv:2504.02477.

- [18] Various. (2025). Tactile Robotics: An outlook. arXiv preprint. arXiv:2508.11261.