Chapter 1: Why Tactile Sensing — Giving Robots the Sense of Touch

Overview

Humans can find keys in a pocket, peel a single page from a stack, and transfer an egg without cracking it — all without looking. Underlying each of these acts is tactile sensing, a modality that most robots today entirely lack. This chapter argues why touch is indispensable for robotic manipulation, traces the historical arc of tactile robotics, catalogs failure modes that arise without it, and lays out the roadmap for the rest of the book.

After reading this chapter, you will be able to:

- Describe how biological touch works and how it differs from current robotic tactile sensing.
- Identify concrete manipulation scenarios where the absence of touch leads to failure.
- Trace the four generations of tactile robotics research.
- Navigate the structure and scope of this book.

1.1 The Biology of Touch

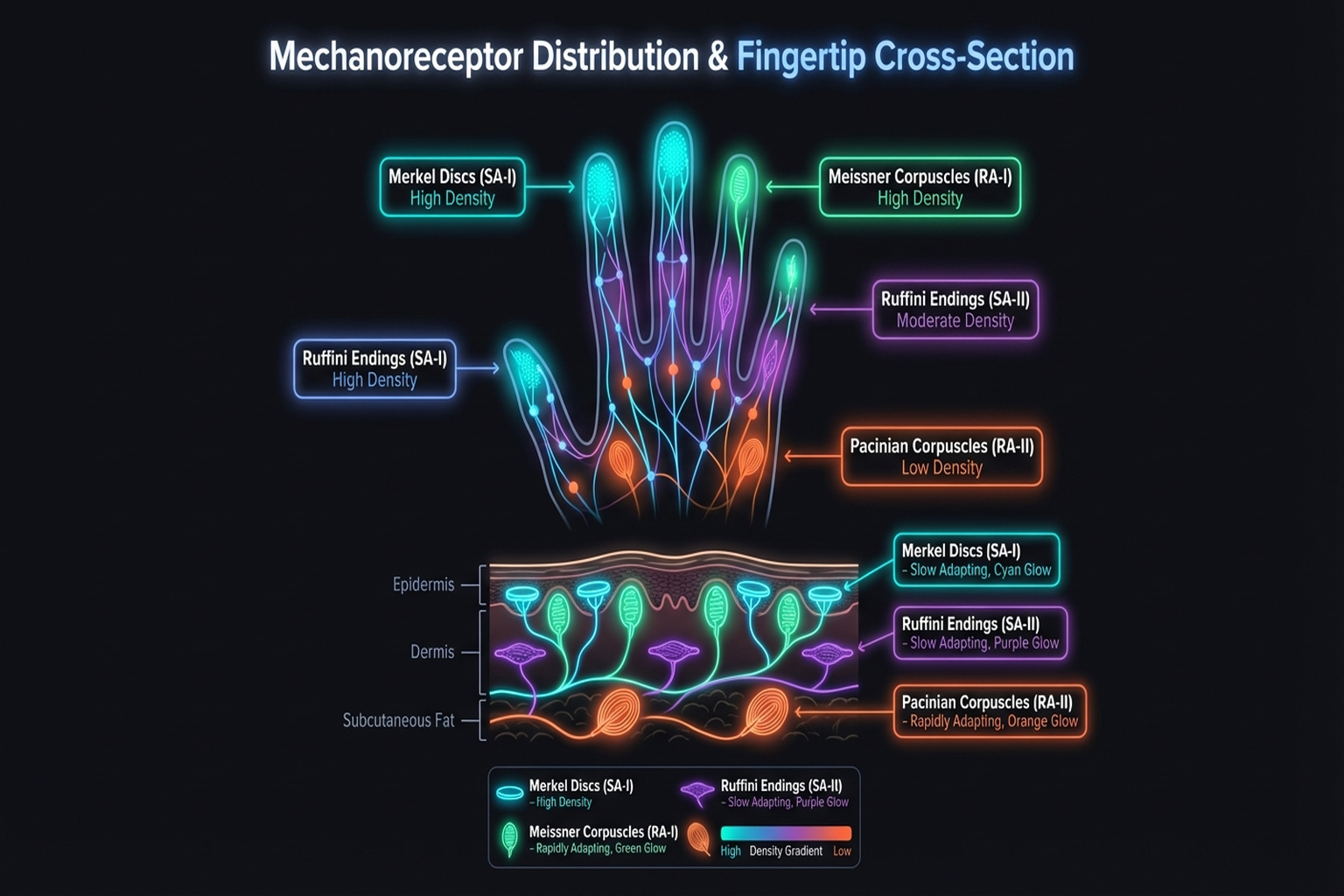

The human hand contains roughly 17,000 mechanoreceptors that detect contact, pressure, vibration, and skin stretch [1]. Receptor density at the fingertip reaches approximately 240 units/cm², enabling discrimination of surface features as fine as 13 μm [2].

The four receptor types and their robotic analogs are summarized below:

| Receptor Type | Stimulus | Adaptation | Robotic Counterpart |
|---|---|---|---|
| Merkel disc | Pressure, shape | Slow (SA I) | Piezoresistive |
| Meissner corpuscle | Light touch, slip | Fast (RA I) | Capacitive |
| Ruffini ending | Skin stretch | Slow (SA II) | Strain gauge |
| Pacinian corpuscle | Vibration (40-400 Hz) | Fast (RA II) | Piezoelectric |

Key Paper: Johansson, R. S. & Flanagan, J. R. (2009). "Coding and Use of Tactile Signals from the Fingertips in Object Manipulation Tasks." Nature Reviews Neuroscience, 10, 345-359. A foundational neuroscience study revealing how fingertip tactile signals are encoded and exploited during object manipulation.

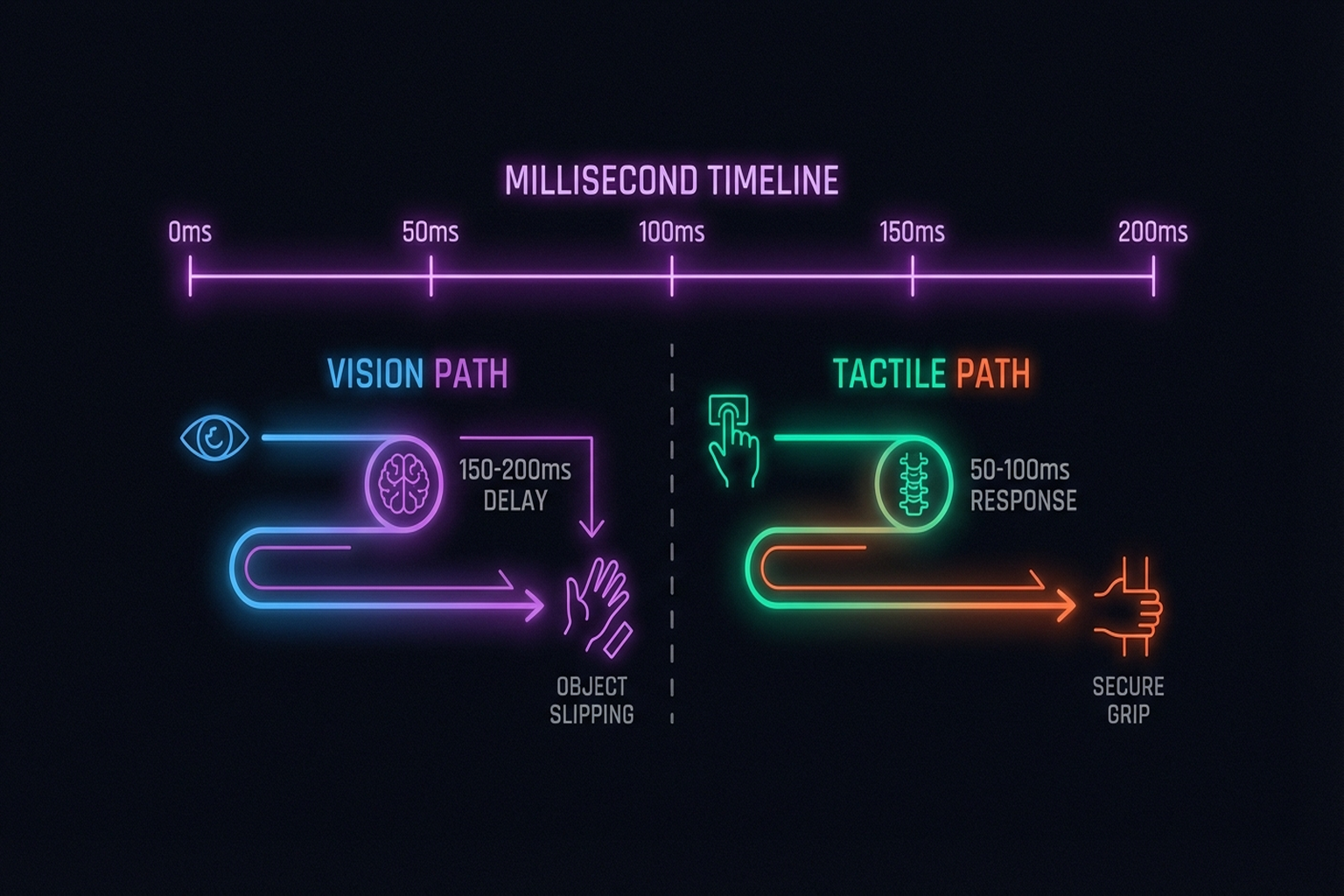

When grasping an object, humans detect slip within approximately 100 ms and adjust grip force accordingly. Without this feedback loop, we would crush paper cups and drop eggs. The same principle applies to robots: safe, dexterous manipulation in contact-rich environments demands tactile feedback.
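The feedback loop described above can be illustrated with a small controller. This is a minimal sketch, not a model of the human reflex: the function names `adjust_grip` and `run_grip_loop`, the step sizes, and the force limits are all hypothetical values chosen for illustration. The force rises quickly when slip is reported and relaxes slowly otherwise, so a fragile object is not squeezed harder than necessary.

```python
def adjust_grip(force_n, slip_detected, step_n=0.5, relax_n=0.05,
                min_n=0.5, max_n=20.0):
    """Return the next grip force command in newtons.

    All gains and limits are illustrative assumptions, not measured values.
    """
    if slip_detected:
        # Slip reported: tighten quickly.
        force_n += step_n
    else:
        # No slip: relax slowly toward the minimum holding force.
        force_n -= relax_n
    # Clamp to the allowed force range.
    return max(min_n, min(max_n, force_n))


def run_grip_loop(slip_events, initial_n=1.0):
    """Apply adjust_grip over a sequence of boolean slip readings."""
    force_n = initial_n
    history = []
    for slip in slip_events:
        force_n = adjust_grip(force_n, slip)
        history.append(force_n)
    return history
```

Calling `run_grip_loop([True, True, False])` raises the commanded force over the two slip readings and then lets it relax slightly on the third.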

1.2 A Brief History of Tactile Robotics

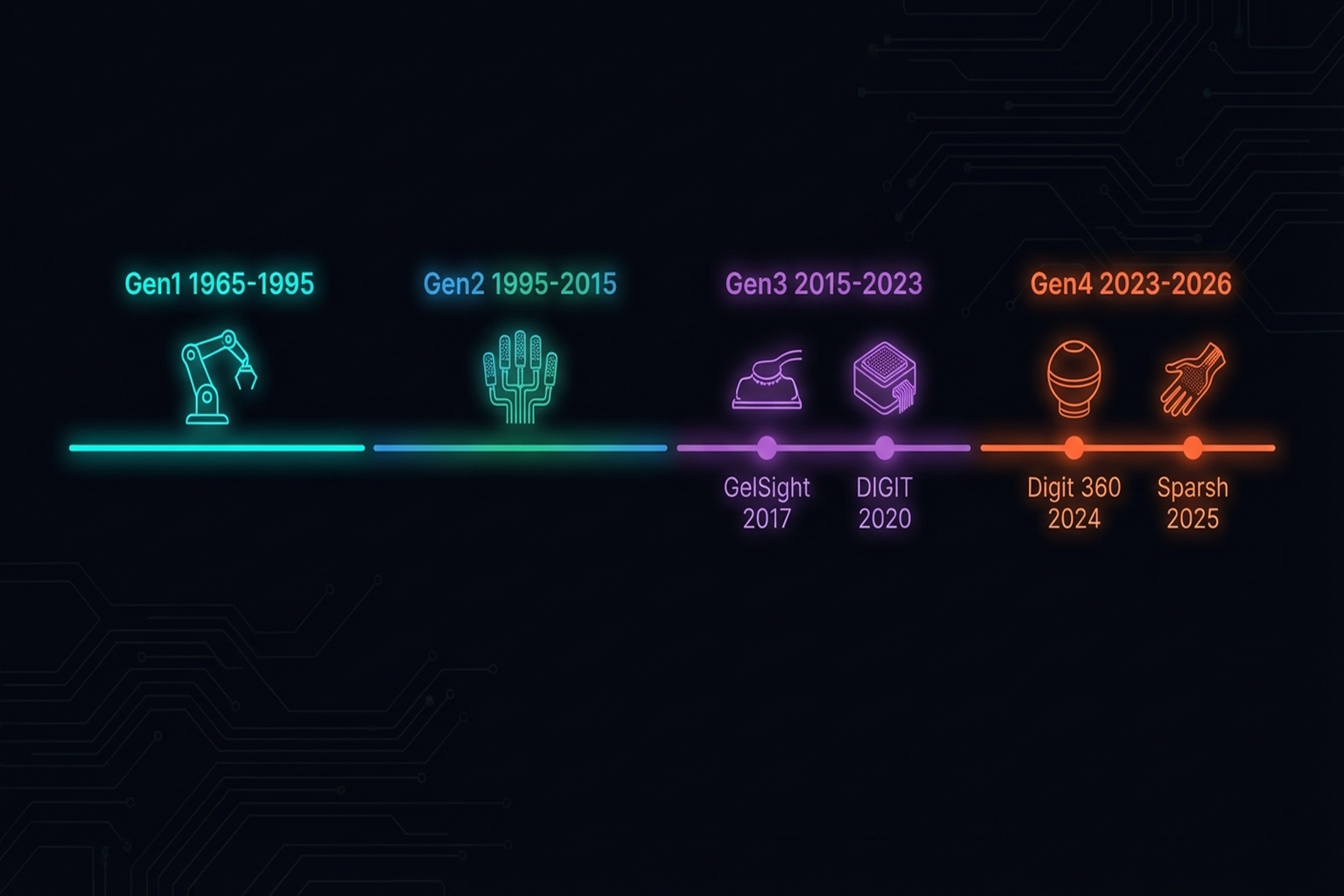

The history of tactile robotics stretches back to the 1960s. The survey "Tactile Robotics: Past and Future" [14] organizes this history into four generations:

Generation 1 — Origins (1965-1980s): Early tactile sensors provided simple binary contact detection. Force-torque sensors began appearing on industrial robot arms, but fingertip-level sensing remained a distant goal.

Generation 2 — Growth (1980s-2000s): As documented in the landmark survey by Dahiya et al. [3], this era explored diverse transduction methods — piezoresistive, capacitive, piezoelectric, and others. Dexterous hand prototypes such as the Shadow Hand (1990s) appeared, but sensor durability and cost remained formidable barriers to practical deployment.

Key Paper: Dahiya, R. S., Metta, G., Valle, M., & Sandini, G. (2010). "Tactile Sensing: From Humans to Humanoids." IEEE Transactions on Robotics, 26(1), 1-20. The de facto starting reference for tactile robotics research, covering transduction methods, data processing, and system-level integration.

Generation 3 — The Tactile Winter (2000-2015): The explosive progress of computer vision (deep learning, CNNs) drew the research community's attention toward vision-based robotics, and tactile research entered a relative plateau. Nonetheless, high-performance sensors such as BioTac ($5K-10K) kept foundational research alive.

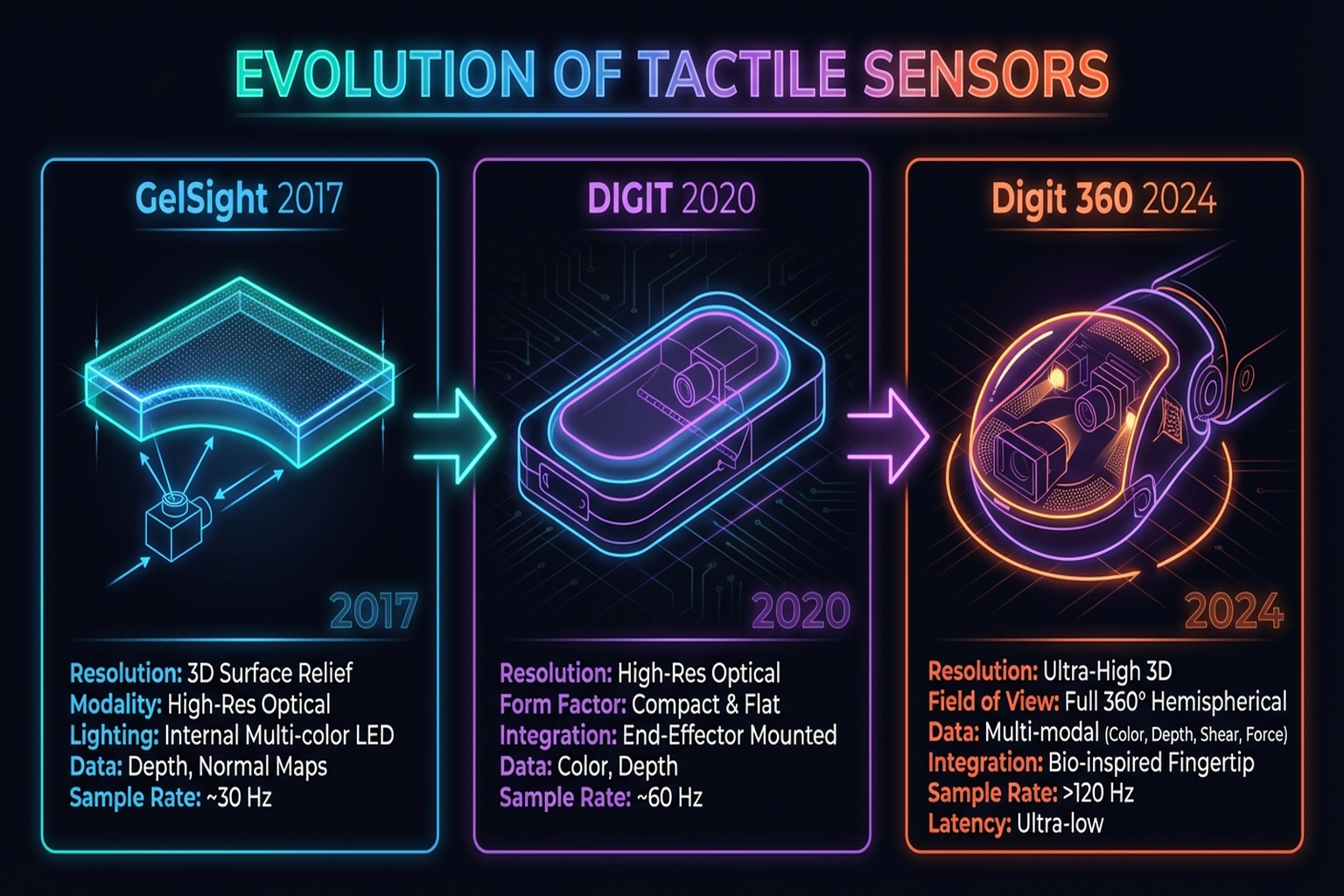

Generation 4 — Renaissance (2015-present): The introduction of GelSight [4] marked a turning point. Vision-based optical tactile sensors delivered dramatically higher resolution at far lower cost — DIGIT [5] brought the price to roughly $350. By 2024, Digit 360 offered 8.3 million taxels and 18+ sensing modalities in a single multimodal artificial fingertip [6], prompting claims that the "ImageNet moment" for touch has arrived.

Another catalyst for this renaissance has been the democratization of low-cost robot hands. LEAP Hand [7] introduced a 3D-printable anthropomorphic hand at $2,000, making dexterous manipulation research accessible without the $16,000 Allegro Hand or the $100,000+ Shadow Hand (→ Chapter 4).

1.3 When Touch Is Missing: Failure Modes

Numerous manipulation scenarios resist solution by vision alone. The following are representative cases where the absence of tactile feedback is a direct cause of failure.

1.3.1 Grasp Failure

When grasping objects, visual information alone is insufficient to determine appropriate grip force. A Styrofoam cup and a ceramic mug may look similar to a camera, yet the required gripping forces differ by an order of magnitude. The F-TAC Hand [16] covered 70% of the hand surface with 17 tactile sensors and achieved 100% success in multi-object grasping, a direct demonstration of the power of tactile feedback (→ Chapter 2.4).

1.3.2 Slip Detection

Detecting incipient slip requires real-time monitoring of shear force changes. Universal Slip Detection [17] achieved 97% accuracy with 1.26 ms latency using tactile feedback. Cameras can only observe slip after it has occurred; tactile sensors detect it as it begins.
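Monitoring shear force changes can be sketched as a simple rate threshold on the tangential force magnitude. This is a hypothetical illustration, not the method of [17]: the function names, the sampling interval `dt_s`, and the threshold are assumptions, and a practical detector would also filter sensor noise.

```python
import math


def shear_magnitude(fx, fy):
    """Magnitude of the tangential (shear) force in newtons."""
    return math.hypot(fx, fy)


def detect_slip_onset(samples, dt_s, rate_threshold_n_per_s):
    """Return the index of the first sample whose shear-force rate of change
    exceeds the threshold, or None if no such sample exists.

    samples: sequence of (fx, fy) tangential force readings in newtons.
    dt_s: assumed constant sampling interval in seconds.
    """
    prev = None
    for i, (fx, fy) in enumerate(samples):
        mag = shear_magnitude(fx, fy)
        if prev is not None:
            rate = abs(mag - prev) / dt_s
            if rate > rate_threshold_n_per_s:
                return i
        prev = mag
    return None
```

With a 1 kHz stream, a sudden drop in shear force between two consecutive samples (the contact letting go) exceeds the threshold, while small fluctuations around a steady hold do not.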

1.3.3 Deformable Objects

Manipulating cloth, cables, and food is inherently difficult with vision alone. Estimating stiffness, friction coefficients, and current deformation state requires contact information. PP-Tac [18] combined an R-Tac tactile sensor with slip detection in a Diffusion Policy framework, achieving 87.5% success on thin-object grasping, including paper and cards (→ Chapter 7.3).

1.3.4 Occlusion

During in-hand rotation, the robot's own fingers occlude most of the object. NeuralFeels [12] addressed this through neural field-based visuotactile SLAM, simultaneously estimating object pose and shape inside the hand (Science Robotics, 2024) — a task impossible with vision alone (→ Chapter 11.1).

1.4 What Touch Enables

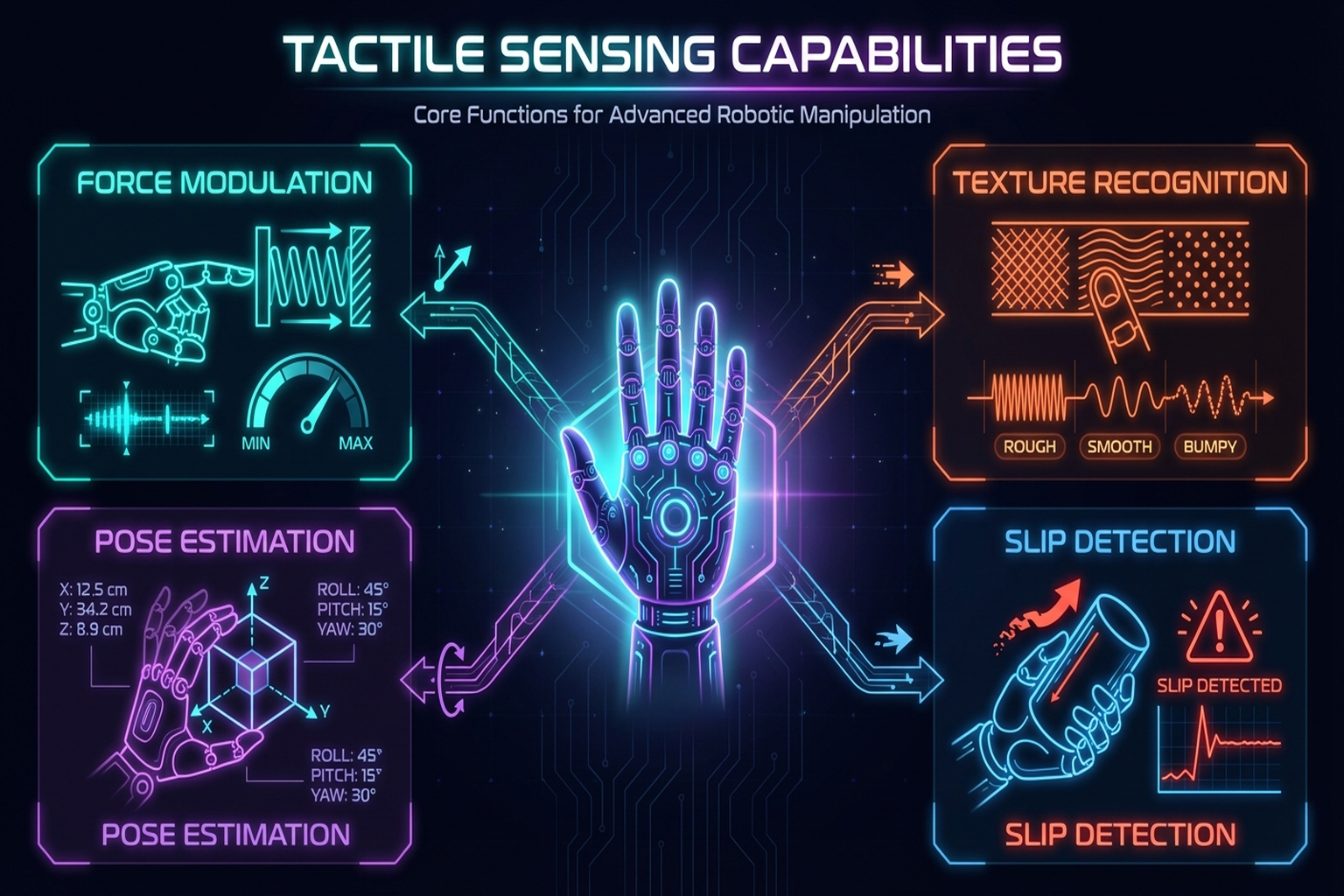

Tactile sensing provides four core capabilities for robotic manipulation:

1.4.1 Force Modulation

Tactile feedback enables real-time adjustment of grip force. Hogan [8] established the theoretical foundations of impedance control, which governs the relationship between force and displacement during robot-environment interaction and remains the bedrock of tactile-aware force control. DexForce [21] demonstrated kinesthetic teaching that simultaneously records force-torque information through a spring model (→ Chapter 7.4).
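The force-displacement relationship behind impedance control is often written in a simplified spring-damper form, F = K (x_d − x) + D (v_d − v). The sketch below assumes that one-dimensional form; the function names, the unit mass, and the gain values are hypothetical, and the example is an illustration rather than Hogan's full formulation.

```python
def impedance_force(x_des, x, v_des, v, stiffness, damping):
    """Spring-damper impedance law: F = K (x_des - x) + D (v_des - v)."""
    return stiffness * (x_des - x) + damping * (v_des - v)


def simulate(x0, x_des, stiffness, damping, mass=1.0, dt=0.001, steps=5000):
    """Drive a point mass toward x_des with the impedance law.

    Uses semi-implicit Euler integration; mass, dt, and steps are
    illustrative assumptions.
    """
    x, v = x0, 0.0
    for _ in range(steps):
        f = impedance_force(x_des, x, 0.0, v, stiffness, damping)
        v += (f / mass) * dt   # update velocity from the commanded force
        x += v * dt            # then update position with the new velocity
    return x
```

Lower stiffness makes the simulated finger more compliant: the same position error produces a smaller force, which is the property that lets a controller hold a fragile object without crushing it.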

1.4.2 Slip Detection and Response

By detecting changes in shear force, robots can increase grip force before an object slips. As discussed in Seminar 2, multi-axis sensing is essential because normal force alone cannot predict shear-direction slip [19].
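One way to see why normal force alone is not enough is the Coulomb friction model, under which a contact holds only while the tangential force stays below μ times the normal force. The sketch below assumes that model; the function names, the friction coefficient, and the safety factor are hypothetical and are not taken from [19].

```python
import math


def slip_margin(fx, fy, fz, mu):
    """Coulomb friction margin in newtons.

    fx, fy: tangential (shear) force components; fz: normal force.
    A positive result means the contact is expected to hold;
    zero or negative means slip is expected under this model.
    """
    return mu * fz - math.hypot(fx, fy)


def required_normal_force(fx, fy, mu, safety=1.2):
    """Normal force needed to resist the measured shear, with a safety factor."""
    return safety * math.hypot(fx, fy) / mu
```

Two contacts with the same normal force of 2 N and μ = 0.5 give opposite answers: a shear load of (0.3, 0.4) N leaves a positive margin, while (1.2, 0.9) N leaves a negative one. A sensor that reports only the normal component cannot tell these cases apart.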

1.4.3 Pose Estimation

The distribution of contact points and force vectors enables estimation of an object's pose within the hand. Tactile-only in-hand rotation [10] demonstrated continuous object rotation using only binary tactile sensors without vision — though as noted in Seminar 1, tactile-only manipulation is currently limited to rotation tasks, because touch provides only local, post-contact information.

1.4.4 Texture Recognition

Tactile recognition of surface microstructure provides material property information that vision cannot easily capture. GelSight [4] reconstructs 3D contact surfaces at micrometer resolution via photometric stereo, while UniTouch [13] aligns touch with vision, language, and audio through contrastive learning, enabling zero-shot texture classification (→ Chapter 3.6).
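The idea behind photometric stereo can be shown for a single pixel. The sketch below assumes the classical Lambertian model with three known light directions, where each intensity equals albedo times the dot product of the light direction and the surface normal. It is not GelSight's actual pipeline, which depends on sensor-specific calibration; the function names and light directions are hypothetical.

```python
import math


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(a, b):
    """Solve the 3x3 linear system a @ x = b with Cramer's rule."""
    d = det3(a)
    if abs(d) < 1e-12:
        raise ValueError("light directions must be linearly independent")
    x = []
    for col in range(3):
        # Replace one column of a with b, then take the determinant ratio.
        m = [[b[r] if c == col else a[r][c] for c in range(3)]
             for r in range(3)]
        x.append(det3(m) / d)
    return x


def normal_from_intensities(lights, intensities):
    """Recover (unit normal, albedo) for one pixel from three intensities.

    lights: three unit light-direction vectors, one per row.
    intensities: the pixel brightness observed under each light.
    """
    g = solve3(lights, intensities)          # g = albedo * normal
    albedo = math.sqrt(sum(v * v for v in g))
    if albedo == 0.0:
        raise ValueError("pixel is unlit under all three lights")
    return [v / albedo for v in g], albedo
```

Repeating this per pixel yields a normal map, which can then be integrated into a height map of the contact surface.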

1.5 Industrial and Everyday Applications

Tactile-based robotic manipulation is expanding beyond the laboratory into industrial settings. Markets and Markets projects the humanoid robotics market to grow from $2.9 billion in 2025 to $15.3 billion by 2030 (CAGR 39.2%). Goldman Sachs forecasts 250,000+ unit shipments by 2030; Morgan Stanley envisions a $5 trillion market by 2050.

1.5.1 Manufacturing — The First Beachhead

Factory deployment of humanoid robots is currently led by the automotive sector:

| Company | Customer | Task | Status |
|---|---|---|---|
| Figure AI | BMW Spartanburg | Sheet metal handling | Pilot completed (30K cars) |
| Agility (Digit) | Amazon/GXO | Tote recycling | Active deployment (100K+ totes) |
| Boston Dynamics | Hyundai | Factory operations | Deploying |
| Apptronik | Mercedes Berlin | Intra-logistics | Pilot in progress |
| Sanctuary AI | Magna | Parts sorting | Pilot in progress |

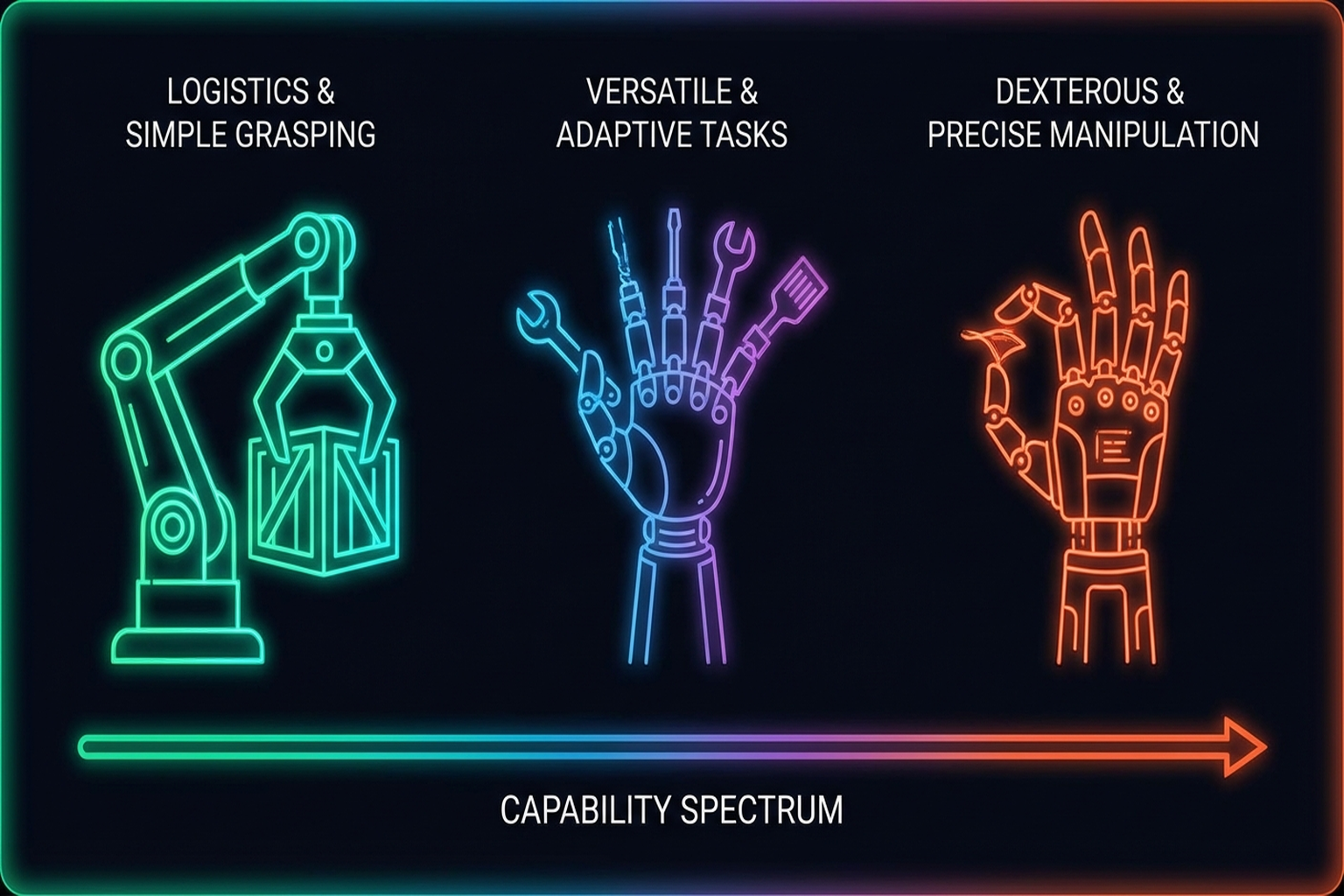

However, as Billard & Kragic [9] observed, current factory deployments remain at the logistics (pick-move-place) level. Dexterous assembly — the domain where tactile feedback is essential — has not yet reached production floors. Bridging this gap is a central challenge for tactile robotics over the next five years (→ Chapters 12, 13).

1.5.2 The Korean Ecosystem

South Korea has established a distinctive ecosystem in tactile robotics:

- Hyundai: Owns Boston Dynamics as a subsidiary; KRW 125.2 trillion investment; Electric Atlas factory deployment.

- Samsung: 35% stake in Rainbow Robotics; CEO-level Future Robotics Office (led by Prof. Oh Jun-ho, KAIST).

- Wonik Robotics: Allegro Hand ($16K) — the de facto research standard; pursuing Digit Plexus integration with Meta FAIR.

- KAIST/ETRI: Next-generation sensors and materials research.

This vertical integration — hardware (Hyundai-BD, Samsung-Rainbow) + sensors (Wonik-Meta) + fundamental research (KAIST/ETRI) — is rare even from a global perspective (→ Chapter 12.2).

1.5.3 Everyday Applications

The 1X NEO ($20K consumer humanoid) began home deployment in 2026, making tactile manipulation in everyday environments a reality. Turning door handles, opening drawers, transferring dishes — human environments are designed for hands, which is the fundamental reason why general-purpose robots require dexterous manipulation capabilities.

1.6 Book Organization and Roadmap

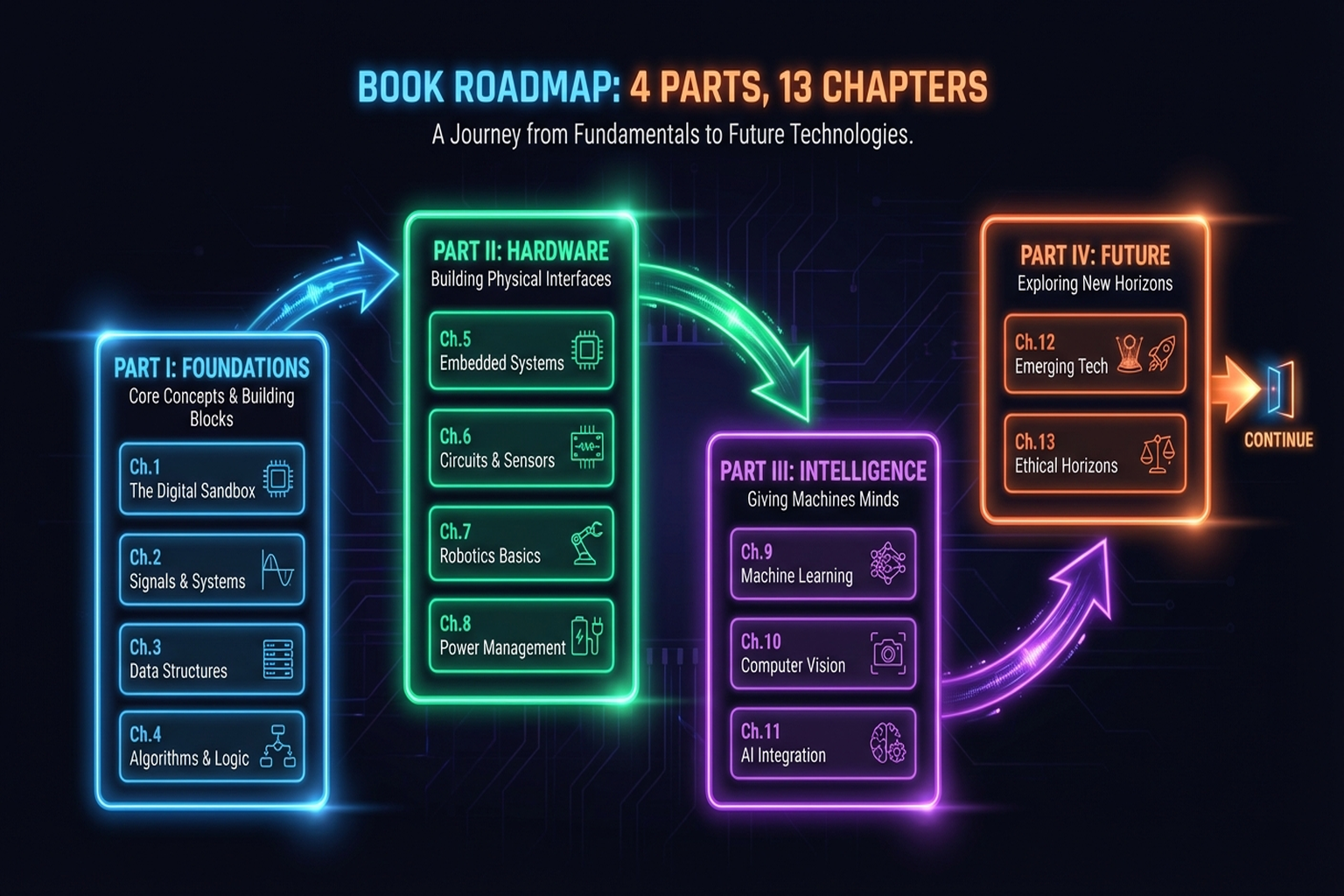

This book comprises four parts and thirteen chapters, painting the full picture of tactile-based robotic manipulation from foundations to applications.

Part I — Foundations of Touch (Chapters 1-3): Beginning with the motivation and biological foundations of touch (this chapter), we cover the physics of sensor technology (Chapter 2) and the representation and collection of tactile data (Chapter 3). These three chapters establish the vocabulary and concepts for all subsequent discussion.

Part II — Hands: Robot and Human (Chapters 4-6): We examine robot hand design principles (Chapter 4) and the physical intelligence of smart mechanisms (Chapter 5), then introduce diverse approaches for collecting human hand data (Chapter 6). Here, "how machines grasp" meets "how humans teach."

Part III — Learning and Transfer (Chapters 7-10): Starting with tactile manipulation learning (Chapter 7), we advance to the frontier of VLA models (Chapter 8), simulation-to-reality transfer (Chapter 9), and human-to-robot skill transfer (Chapter 10). This part encompasses the largest body of recent research and reflects the most active areas of the current community.

Part IV — Integration and Outlook (Chapters 11-13): We conclude with multi-modal system integration (Chapter 11), Physical AI and industry outlook (Chapter 12), and common limitations with future research directions (Chapter 13). Chapter 13 in particular proposes a research direction centered on the mechanism-tactile-learning triangle.

Each chapter is designed to be readable independently, though sequential reading provides the most natural flow. Every chapter includes Key Paper boxes, Figure placeholders, cross-references, and an APA-formatted reference list.

Summary and Outlook

Tactile sensing is the "last puzzle piece" of robotic manipulation. Three converging trends — the cost reduction of vision-based sensors, the democratization of open-source hands, and the emergence of large-scale foundation models — are driving tactile robotics forward at an unprecedented pace. As of 2026, Sanctuary AI has integrated 5 mN sensitivity tactile sensing into a commercial humanoid, NVIDIA's synthetic data pipeline generates 780K trajectories in 11 hours, and pi0/RECAP demonstrates continuous improvement through post-deployment reinforcement learning. Touch is no longer optional — it is becoming standard.

The next chapter enters the world of the sensor technologies that physically realize this sense of touch (→ Chapter 2: Tactile Sensor Technology).

References

- Johansson, R. S., & Flanagan, J. R. (2009). Coding and use of tactile signals from the fingertips in object manipulation tasks. Nature Reviews Neuroscience, 10, 345-359. https://doi.org/10.1038/nrn2621

- Kandel, E. R., Schwartz, J. H., & Jessell, T. M. (2000). Principles of Neural Science (4th ed.). McGraw-Hill.

- Dahiya, R. S., Metta, G., Valle, M., & Sandini, G. (2010). Tactile sensing: From humans to humanoids. IEEE Transactions on Robotics, 26(1), 1-20. https://doi.org/10.1109/TRO.2009.2033627

- Yuan, W., Dong, S., & Adelson, E. H. (2017). GelSight: High-resolution robot tactile sensors for estimating geometry and force. Sensors, 17(12), 2762. https://doi.org/10.3390/s17122762

- Lambeta, M., Chou, P.-W., Tian, S., Yang, B., Maloon, B., Most, V. R., ... & Calandra, R. (2020). DIGIT: A novel design for a low-cost compact high-resolution tactile sensor with application to in-hand manipulation. IEEE Robotics and Automation Letters, 5(3), 3838-3845. https://arxiv.org/abs/2005.14679

- Lambeta, M., Wu, T., Sengul, A., & Calandra, R. (2024). Digitizing touch with an artificial multimodal fingertip (Digit 360). arXiv preprint, arXiv:2411.02834.

- Shaw, K., Agarwal, A., & Pathak, D. (2023). LEAP Hand: Low-cost, efficient, and anthropomorphic hand for robot learning. Robotics: Science and Systems (RSS).

- Hogan, N. (1985). Impedance control: An approach to manipulation. Journal of Dynamic Systems, Measurement, and Control, 107(1), 1-24. https://doi.org/10.1115/1.3140702

- Billard, A., & Kragic, D. (2019). Trends and challenges in robot manipulation. Science, 364(6446), eaat8414.

- Yin, Z.-H., Huang, B., Qin, Y., Chen, Q., & Wang, X. (2023). Rotating without seeing: Towards in-hand dexterity through touch. Robotics: Science and Systems (RSS).

- Pitz, J., Röstel, L., Sievers, L., & Bäuml, B. (2024). Dextrous tactile in-hand manipulation using a modular reinforcement learning architecture. IEEE-RAS International Conference on Humanoid Robots.

- Suresh, S., Si, Z., Anderson, S., Kaess, M., & Mukadam, M. (2024). NeuralFeels: Neural fields for visuotactile perception. Science Robotics, 9(86).

- Yang, F., Feng, C., Chen, Z., Park, H., Wang, D., Dou, Y., ... & Wong, A. (2024). Binding touch to everything: Learning unified multimodal tactile representations. CVPR 2024.

- Lepora, N. F. (2025). Tactile Robotics: Past and Future. arXiv preprint, arXiv:2512.01106.

- Various. (2025). Recent advances in tactile sensing technologies for human-robot interaction: Current trends and future perspectives. Sensors International. https://doi.org/10.1016/j.sintl.2025.100345

- Zhao, Z., Li, W., Li, Y., Liu, T., Li, B., Wang, M., Du, K., Liu, H., Zhu, Y., Wang, Q., Althoefer, K., & Zhu, S.-C. (2025). Embedding high-resolution touch across robotic hands enables adaptive human-like grasping. Nature Machine Intelligence. https://doi.org/10.1038/s42256-025-01053-3

- Zhao, C., Yu, Y., Ye, Z., Tian, Z., Zhang, Y., & Zeng, L.-L. (2025). Universal slip detection of robotic hand with tactile sensing. Frontiers in Neurorobotics, 19. https://doi.org/10.3389/fnbot.2025.1478758

- Lin, P., Huang, Y., Li, W., Ma, J., Xiao, C., & Jiao, Z. (2025). PP-Tac: Paper picking using omnidirectional tactile feedback in dexterous robotic hands. Robotics: Science and Systems (RSS) 2025.

- Cho, W., et al. (2025). Multi-dimensional tactile sensing for force/noise decoupling. ACS Applied Materials & Interfaces.

- Hossain, M., et al. (2025). Multi-axis tactile for object orientation detection. Sensors and Actuators A: Physical.

- Chen, C., Yu, Z., Choi, H., Cutkosky, M., & Bohg, J. (2025). DexForce: Extracting force-informed actions from kinesthetic demonstrations for dexterous manipulation. IEEE Robotics and Automation Letters. arXiv:2501.10356.